Overview

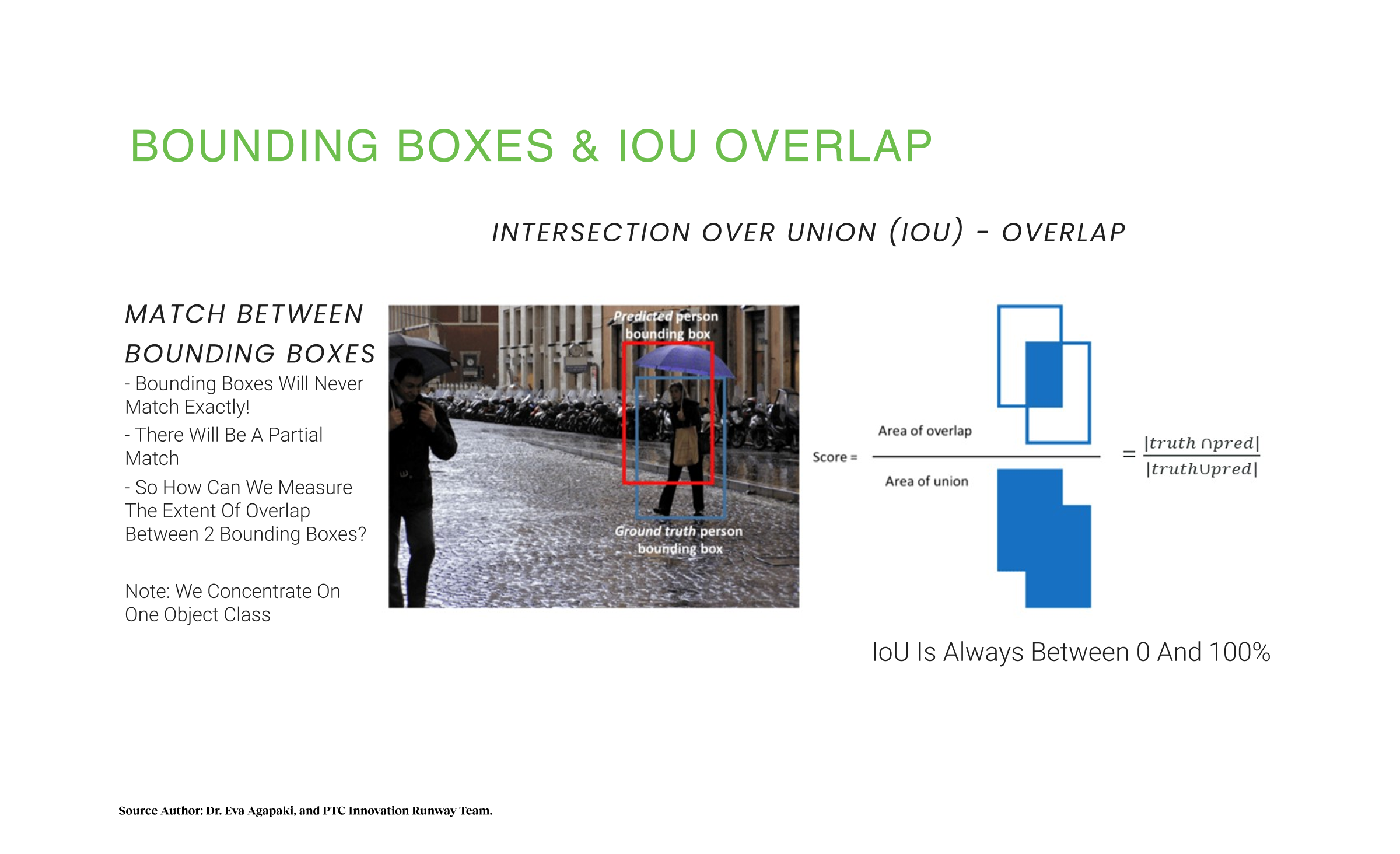

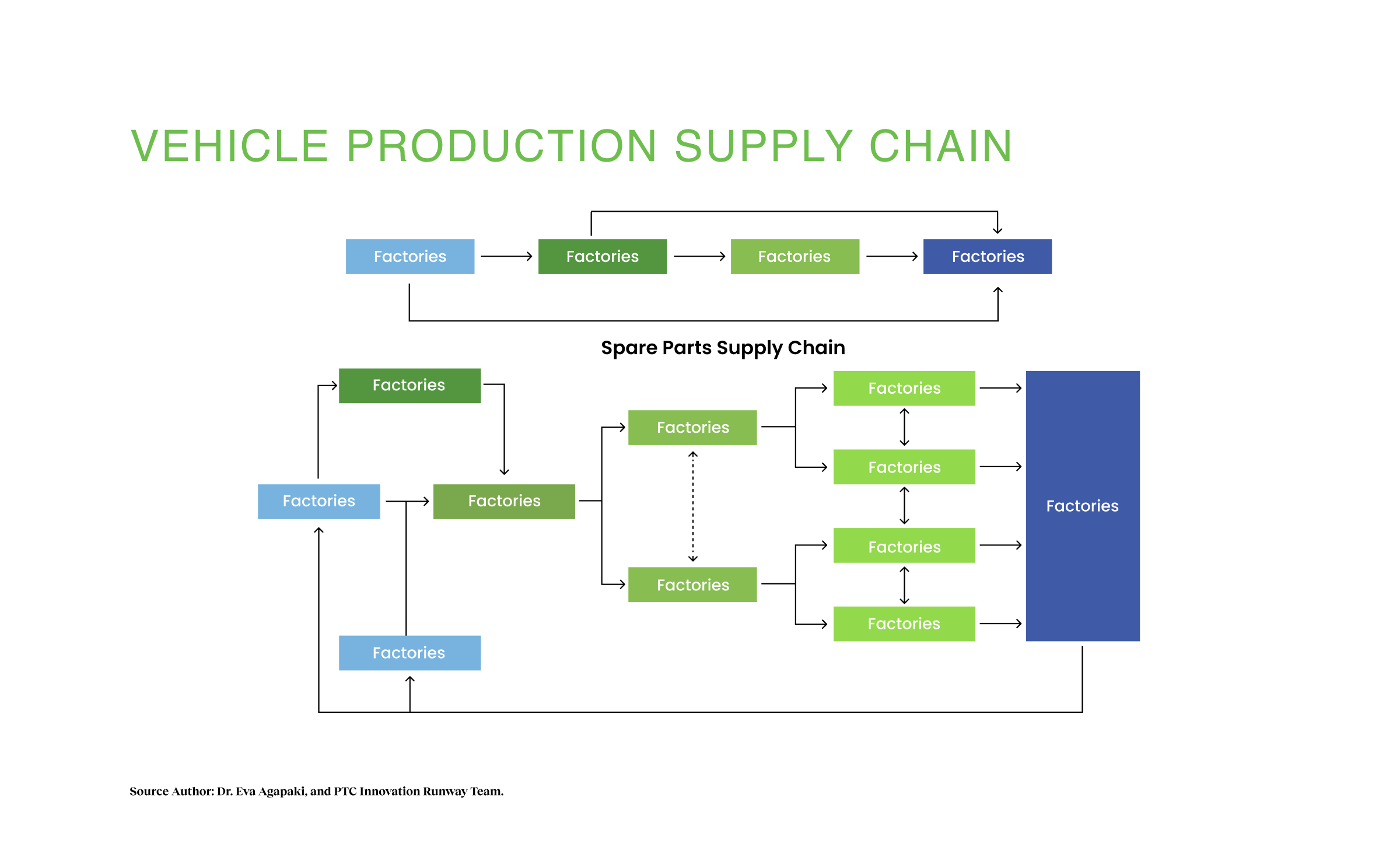

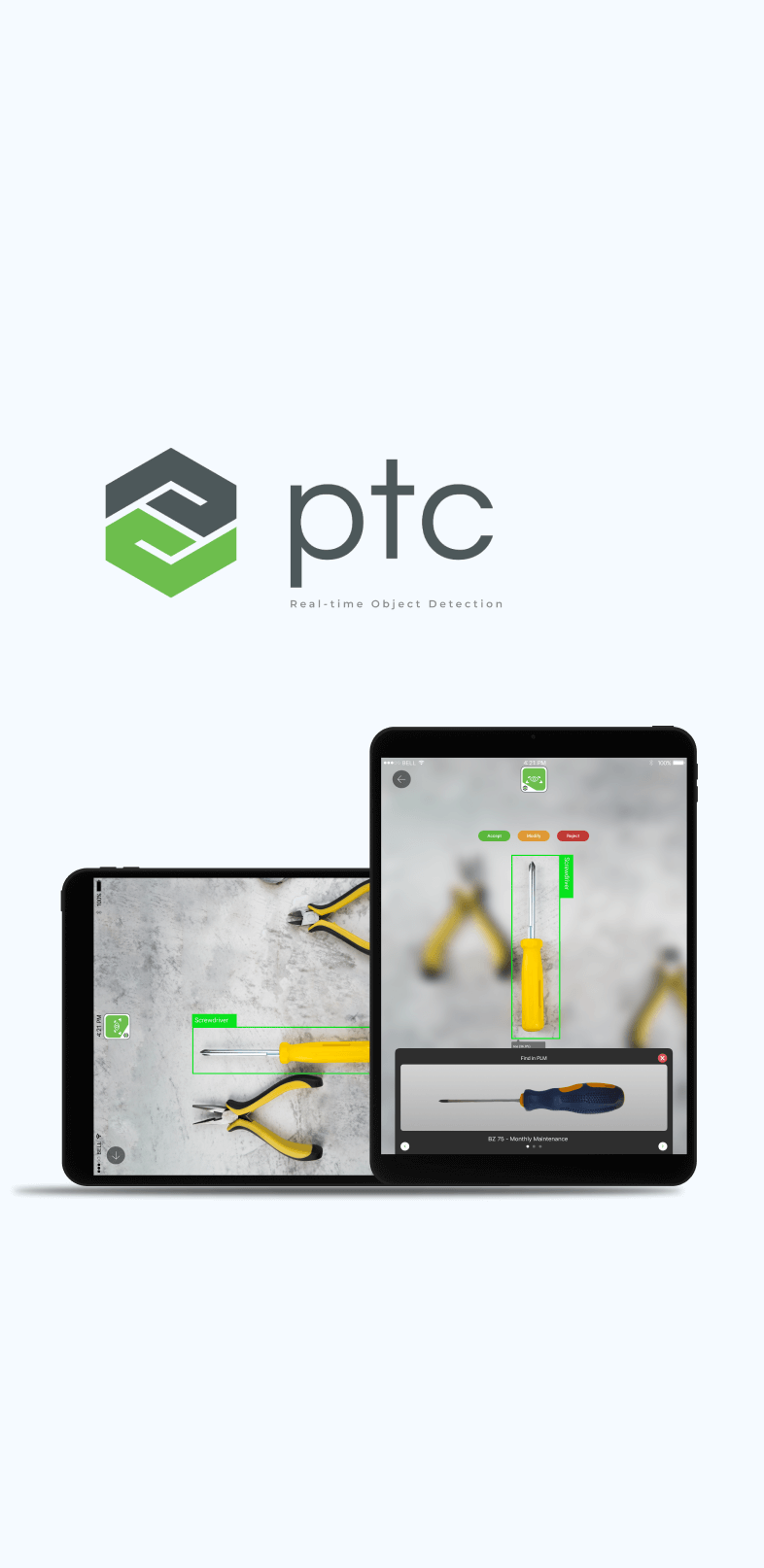

In industrial manufacturing, the gap between a broken machine and the correct replacement part is often a 20-minute search through outdated PDF manuals. PTC’s Innovation Runway team, led by Dr. Eva Agapaki, had developed breakthrough AI research: a pipeline that could train computer vision models using synthetic CAD data instead of thousands of real-world photos. This solved the data scarcity problem, but it created a productization gap. The AI sat in labs for six years, not shipping. The raw output was probabilistic, generating bounding boxes and confidence scores, not definitive answers. For a technician standing in a loud, dimly lit factory wearing safety gloves, a “73% confidence” rating is useless. They need certainty, speed, and zero tolerance for errors that stop production lines.

I was brought in to close this gap. My role was to lead end-to-end product design across Mobile and RealWear platforms, transforming sophisticated research into a commercially viable system. While the research team optimized neural networks, I focused on human factors and ecosystem design: How does a user verify an AI’s guess in extreme constraints? How do we design for safety-critical contexts? How do we turn experimental prototypes into organizational belief?

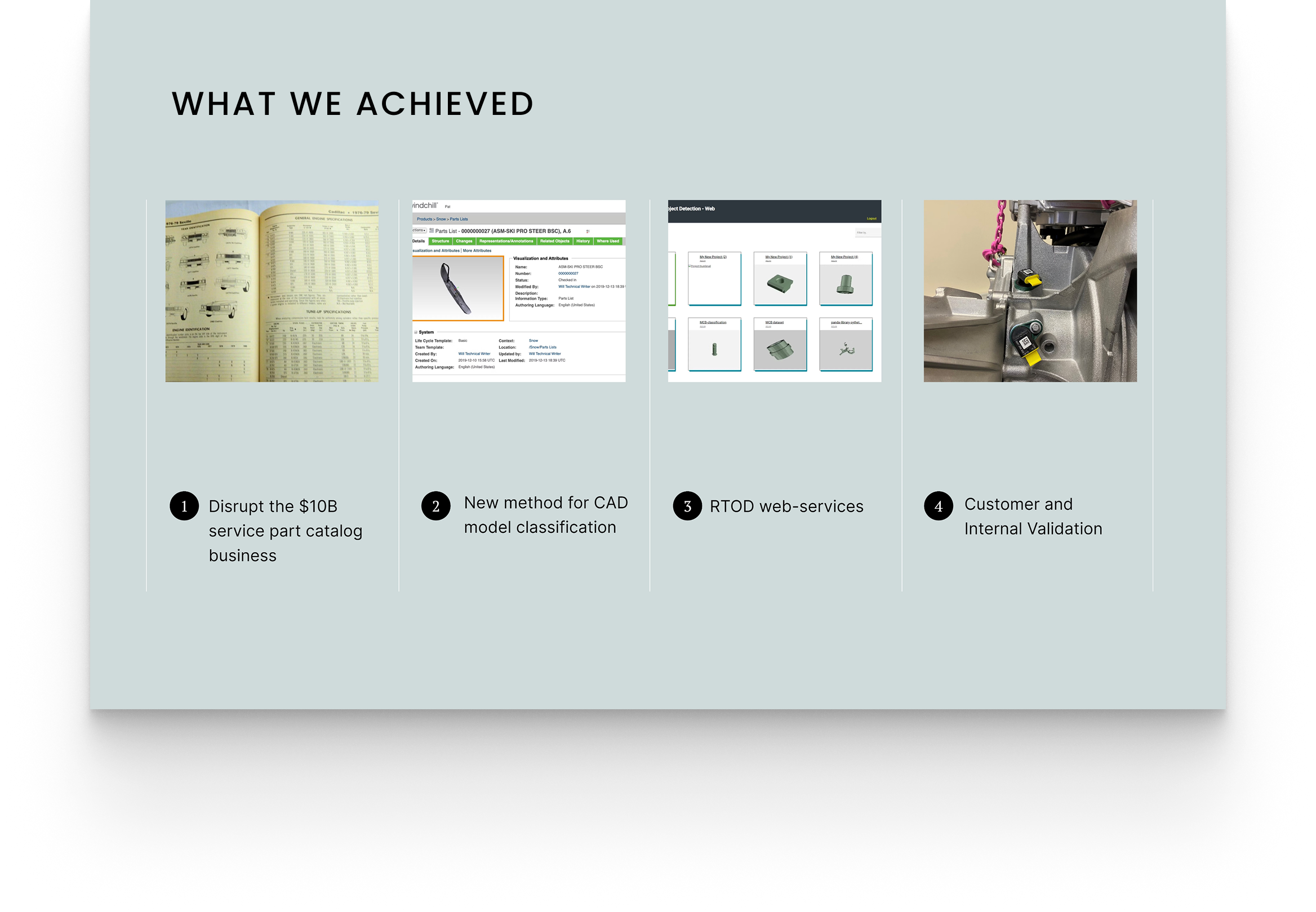

I designed three distinct flows: Classify for frontline technicians, Detect for factory operators, and Label & Train for system improvement. Together they form a complete learning loop. We shipped. Porsche, German automotive OEMs, and IKEA converted pilots into extended contracts, unlocking $20M ARR across 30,000 enterprise customers. Design-led innovation became a business pillar. That’s the proof.

Research & Design

Product Design Lead · Strategic Leadership · AI Human Factors · Cross-Platform Architecture · Technical Prototyping · Team Development

- Tech Stack: Unity, Vuforia Studio, Azure Cognitive Services, TensorFlow, Windchill PLM, RealWear HMT-1

- Duration: 2021-2022

- Partners: PTC, Vuforia, Azure, TensorFlow, Porsche, German Automotive, IKEA

- Team: Fas Lebbie (Design Lead), Dr. Eva Agapaki (AI Research Lead), Deep Learning Engineers, Full-Stack Developers, Product Management, 17-person cross-functional team across 6 countries

WHAT I BROUGHT

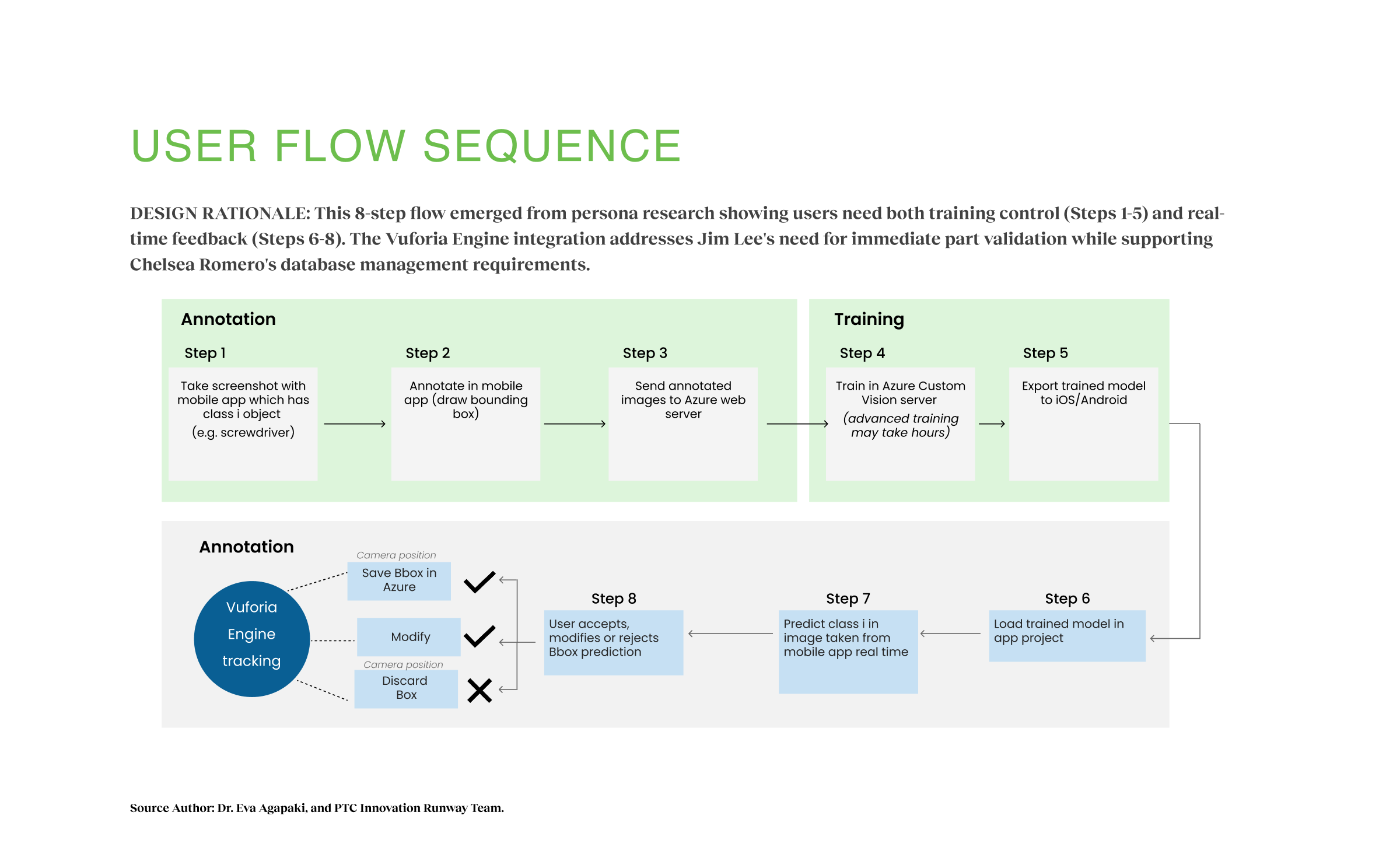

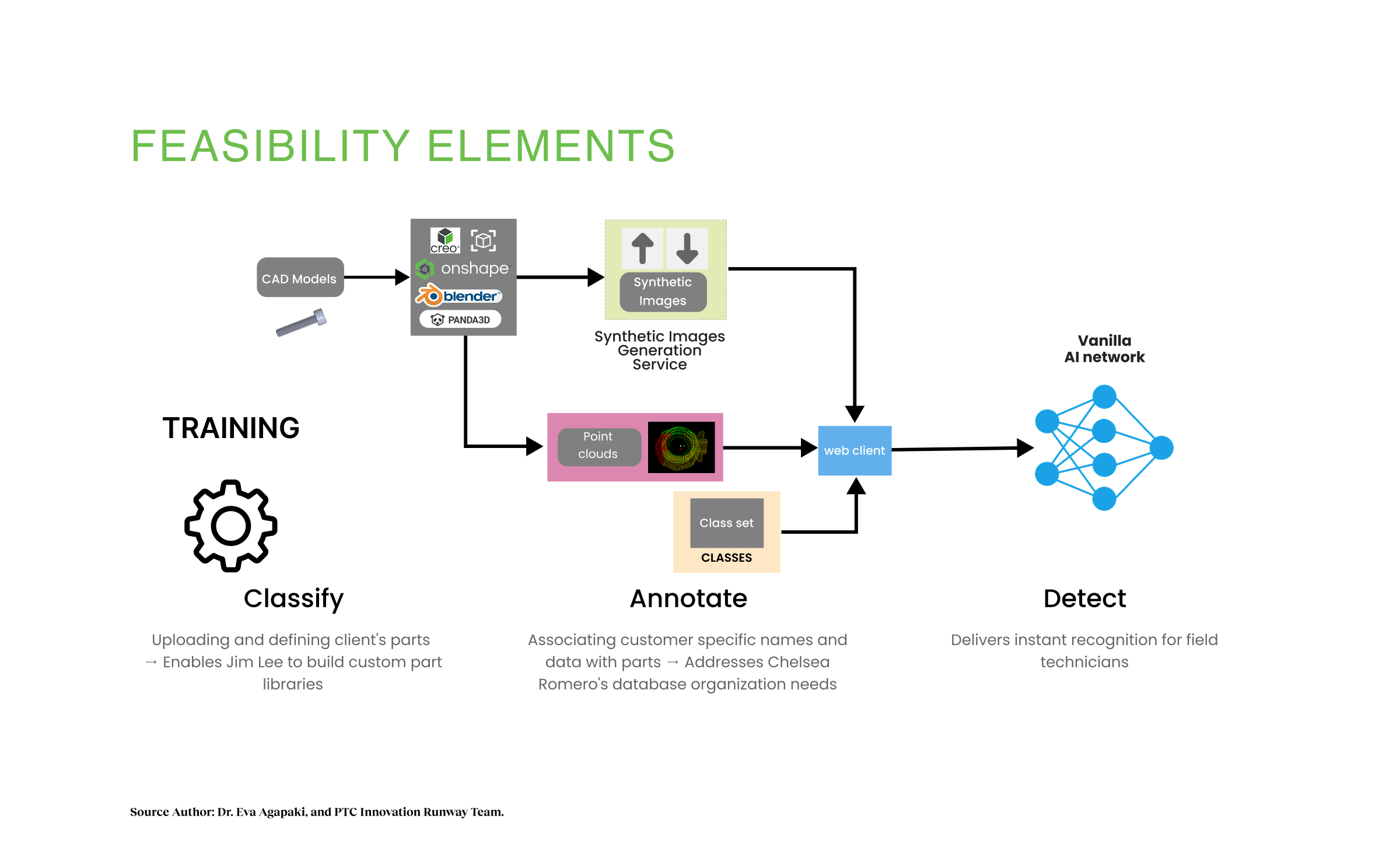

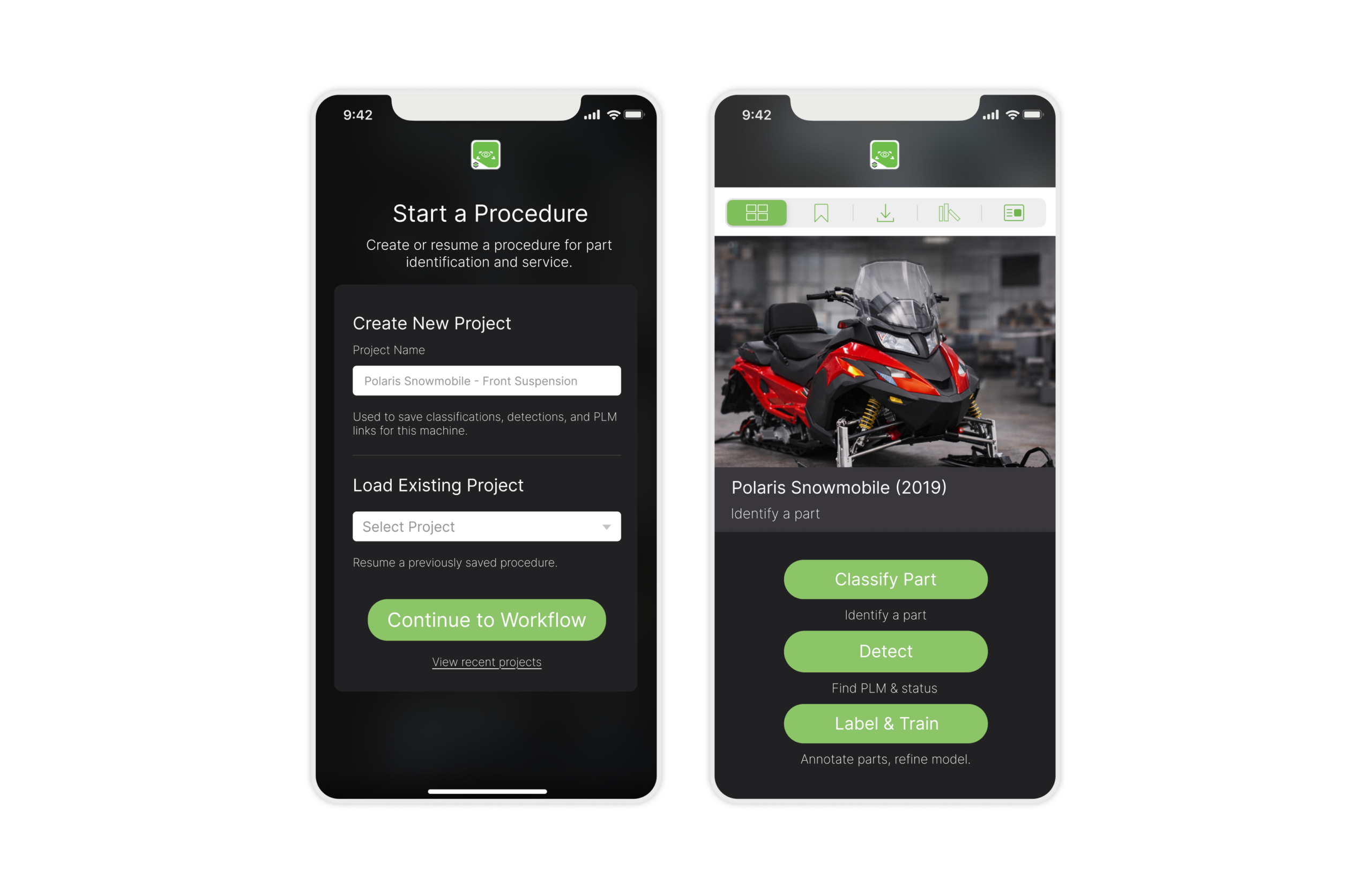

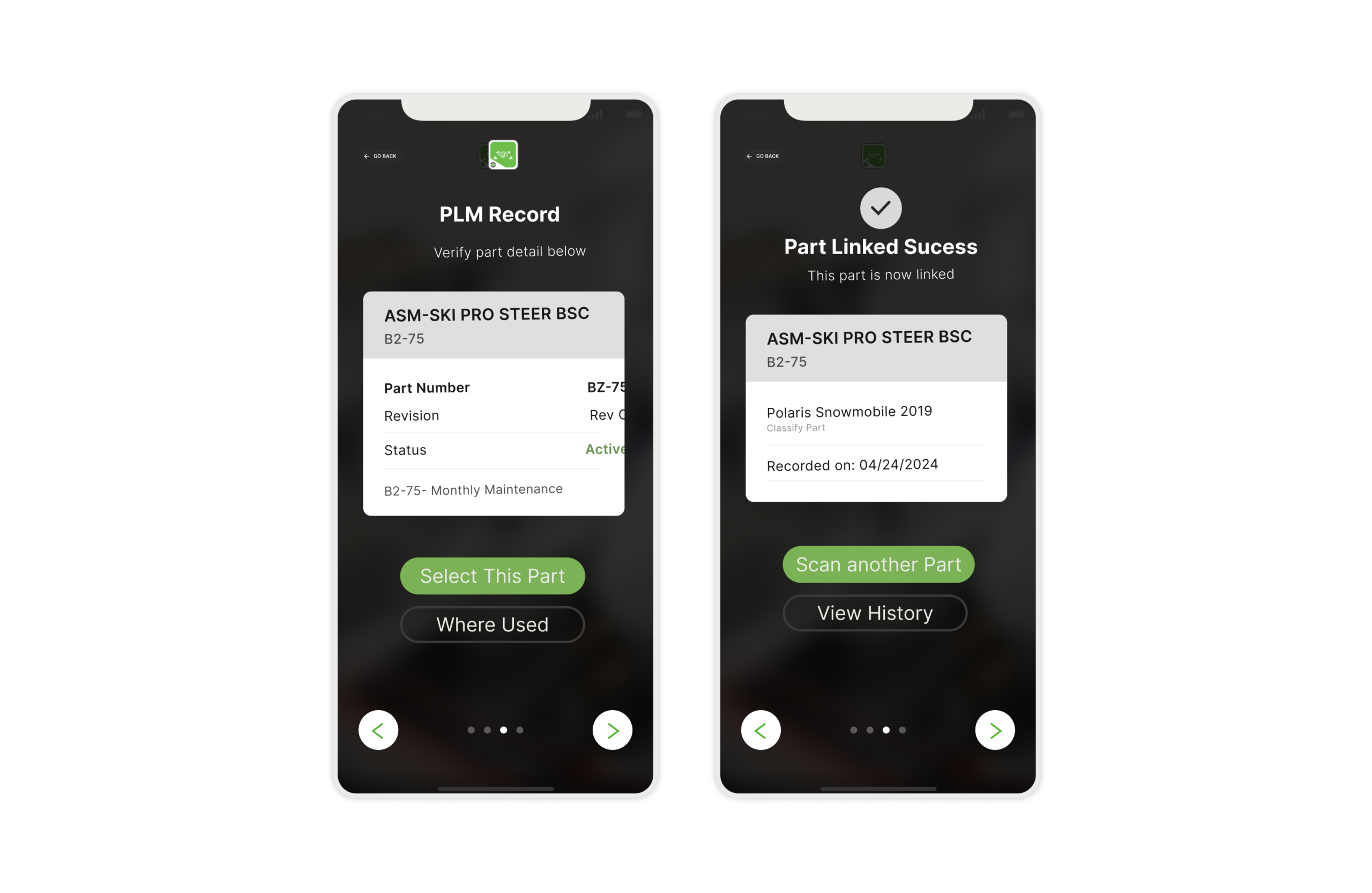

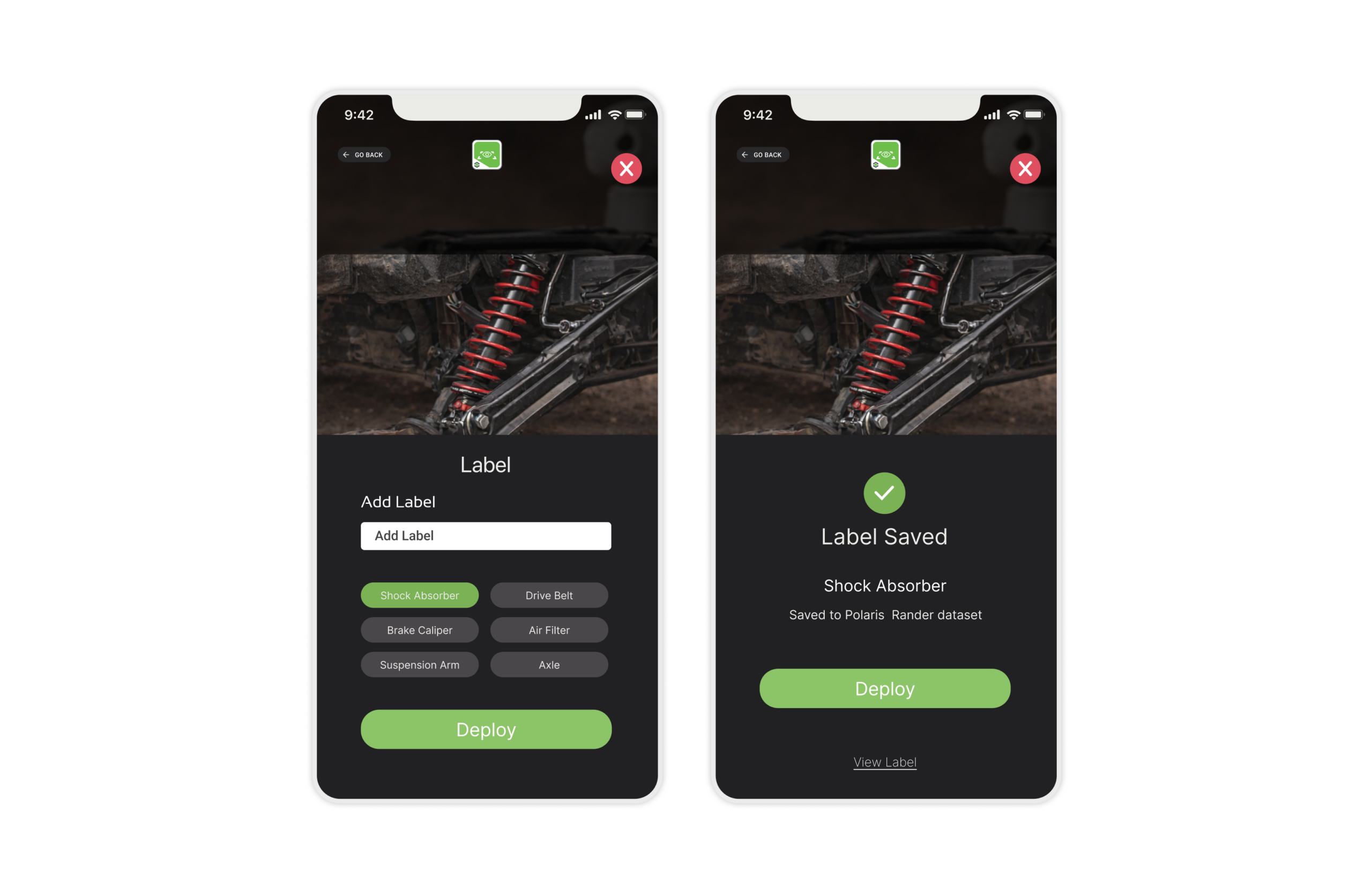

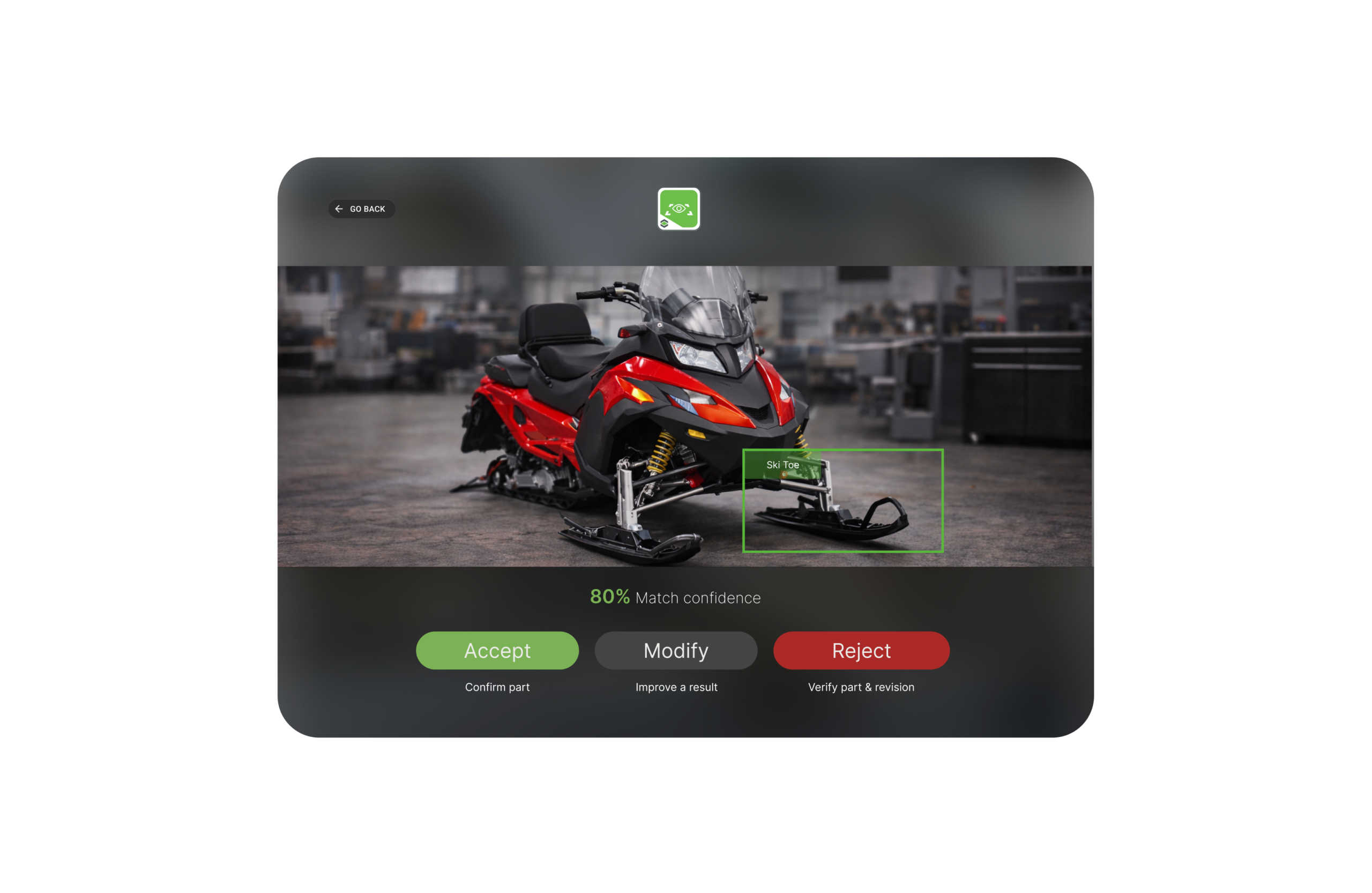

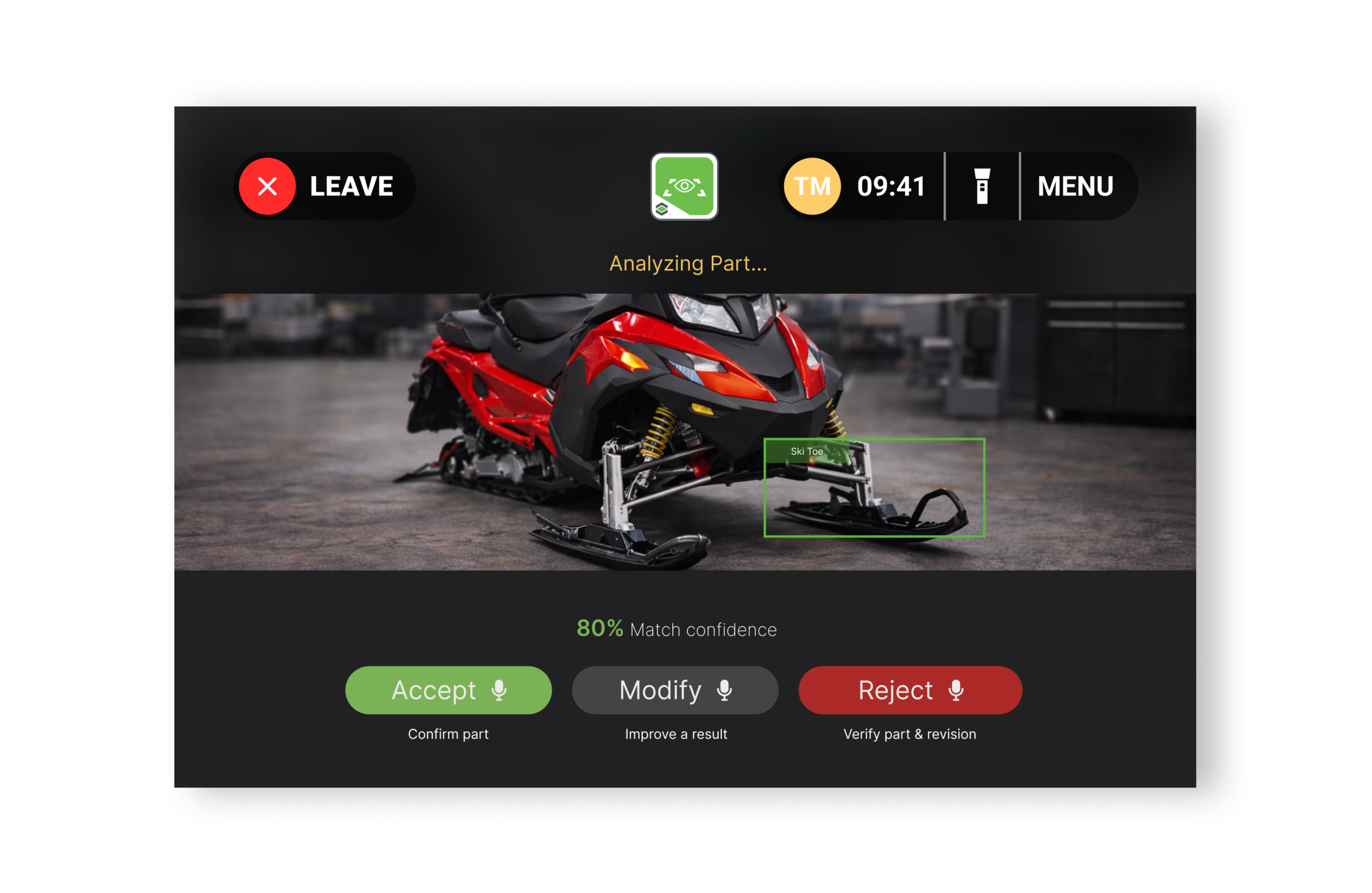

I reframed the product model from "search" (user inputs text) to "verification" (AI proposes, user confirms). I established the "20-200 Part Rule" and designed three distinct flows: Classify for frontline technicians, Detect for factory operators, and Label & Train for system improvement. This created a complete learning loop where better data compounds trust and adoption.

I designed opposite interaction philosophies for Mobile and RealWear platforms. Mobile optimized for speed (30 seconds), RealWear for certainty (gated progression, no auto-commit). Accessibility constraints became better design for everyone, not workarounds.

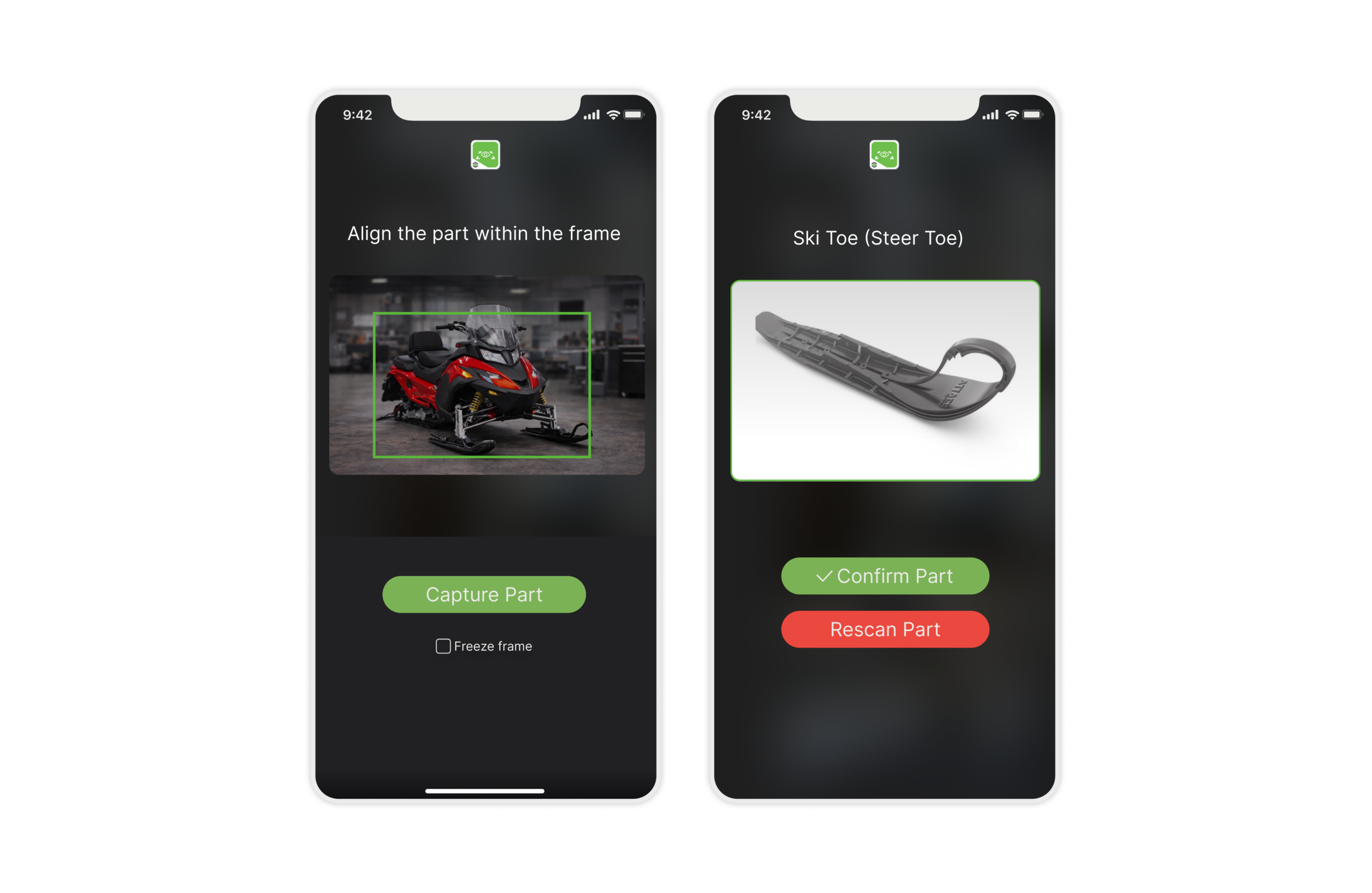

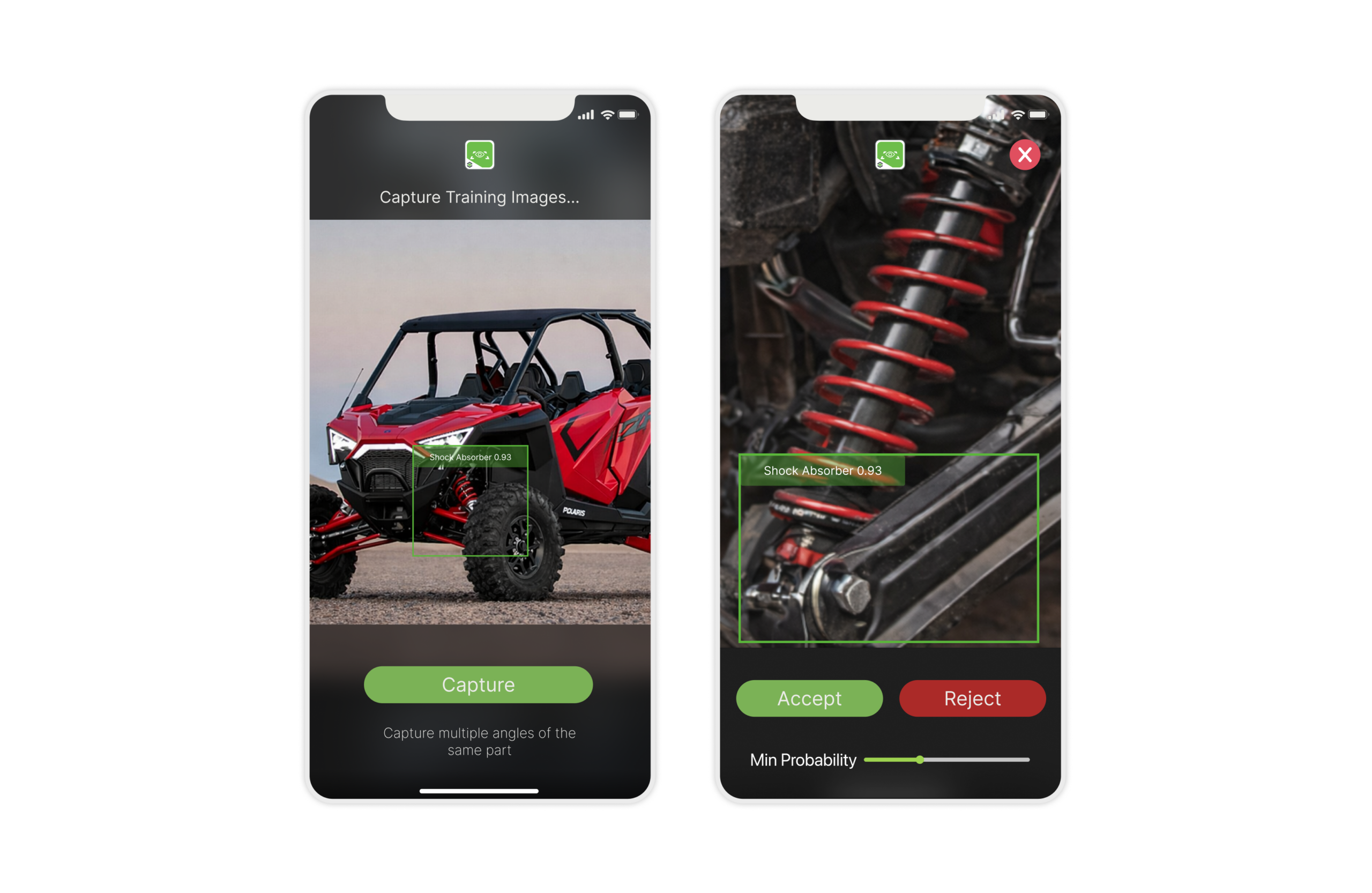

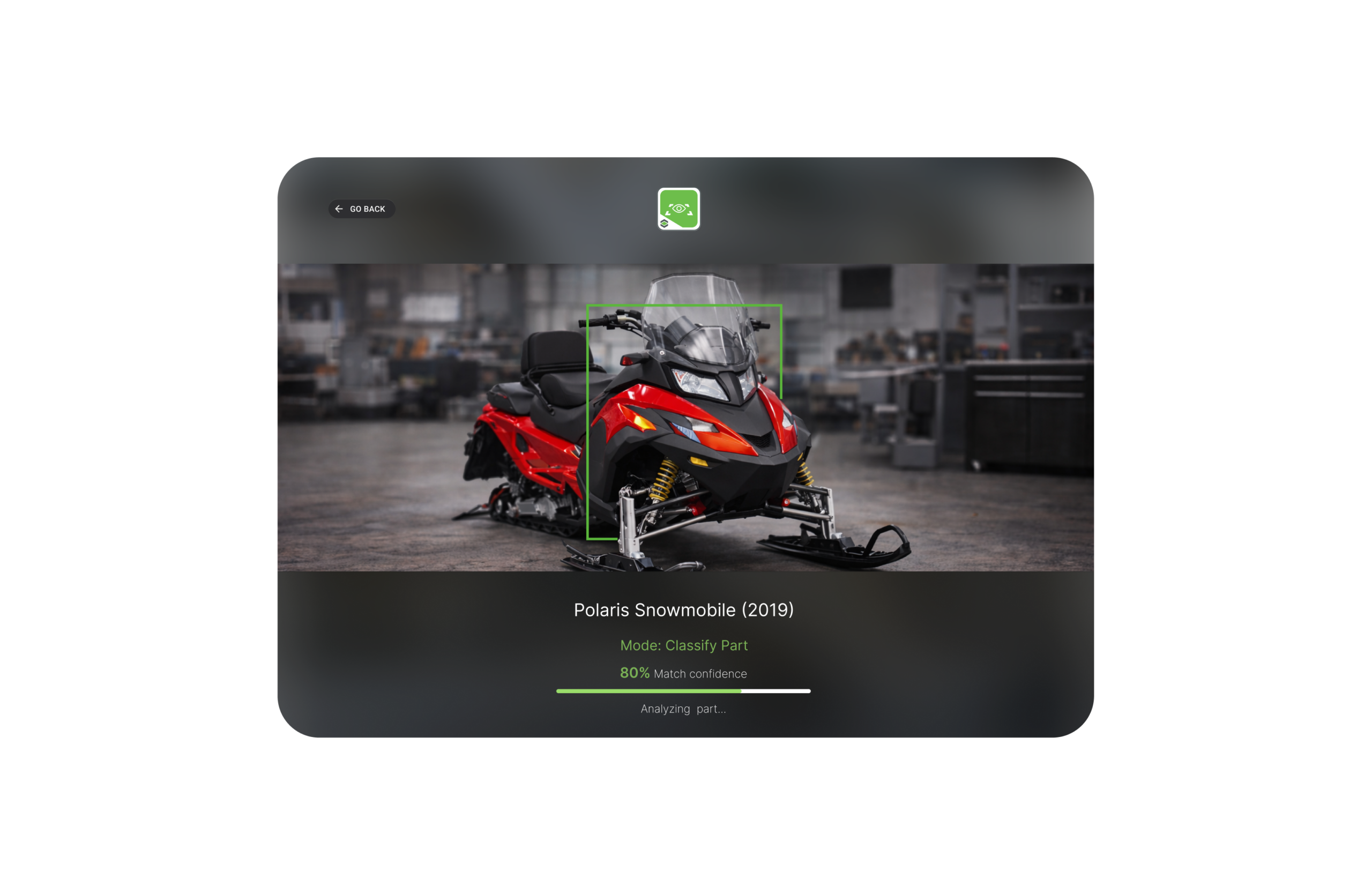

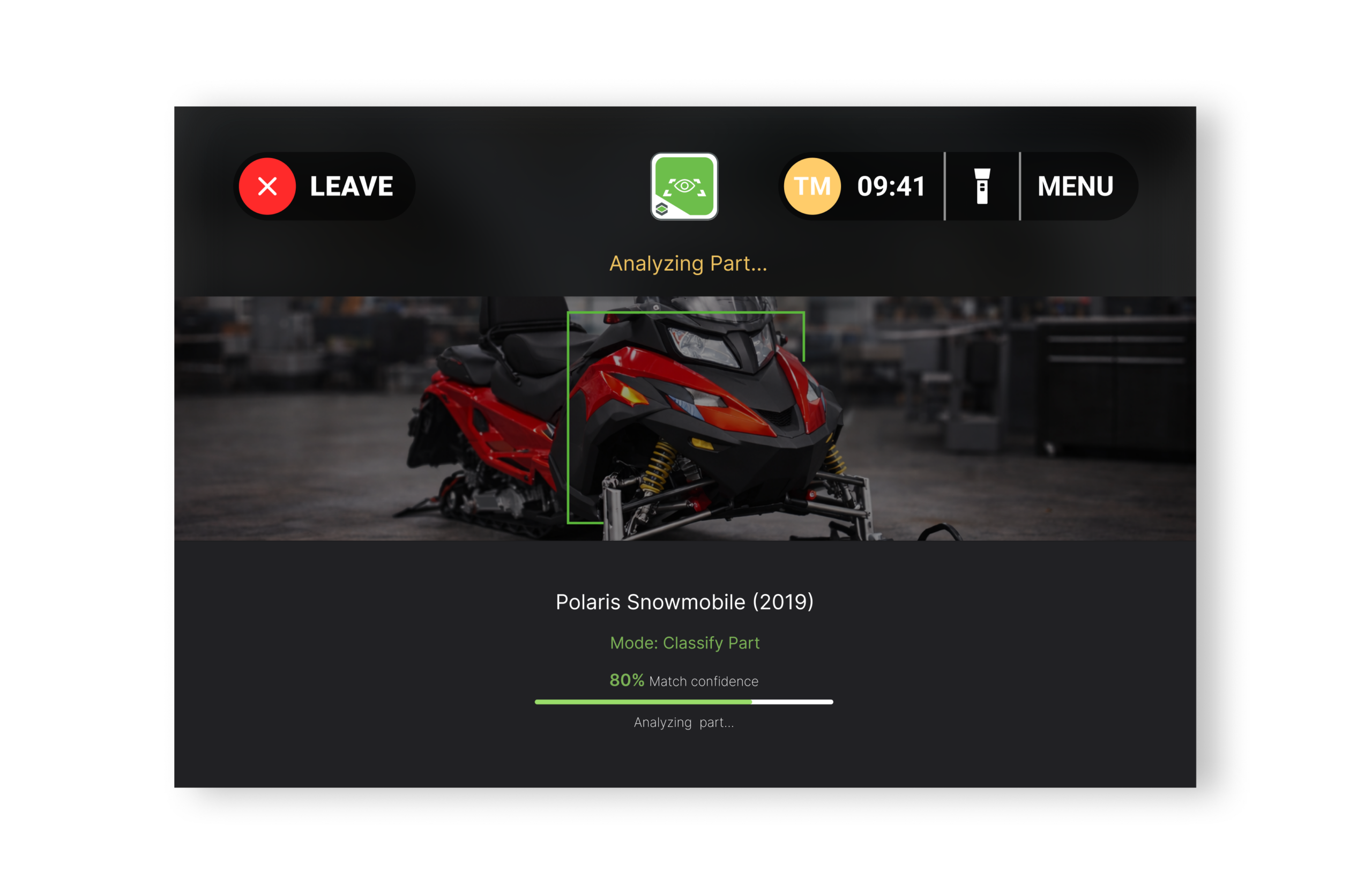

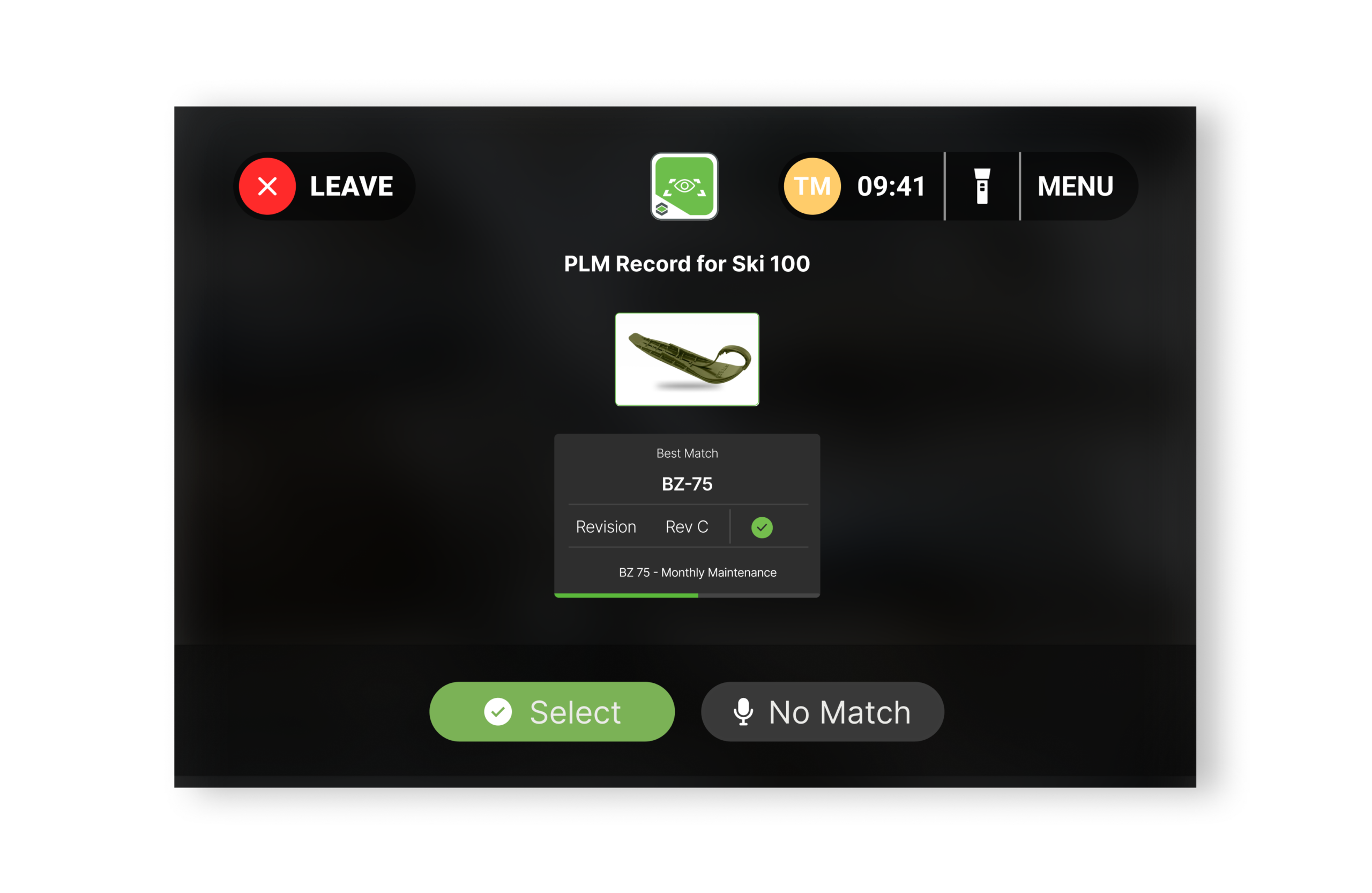

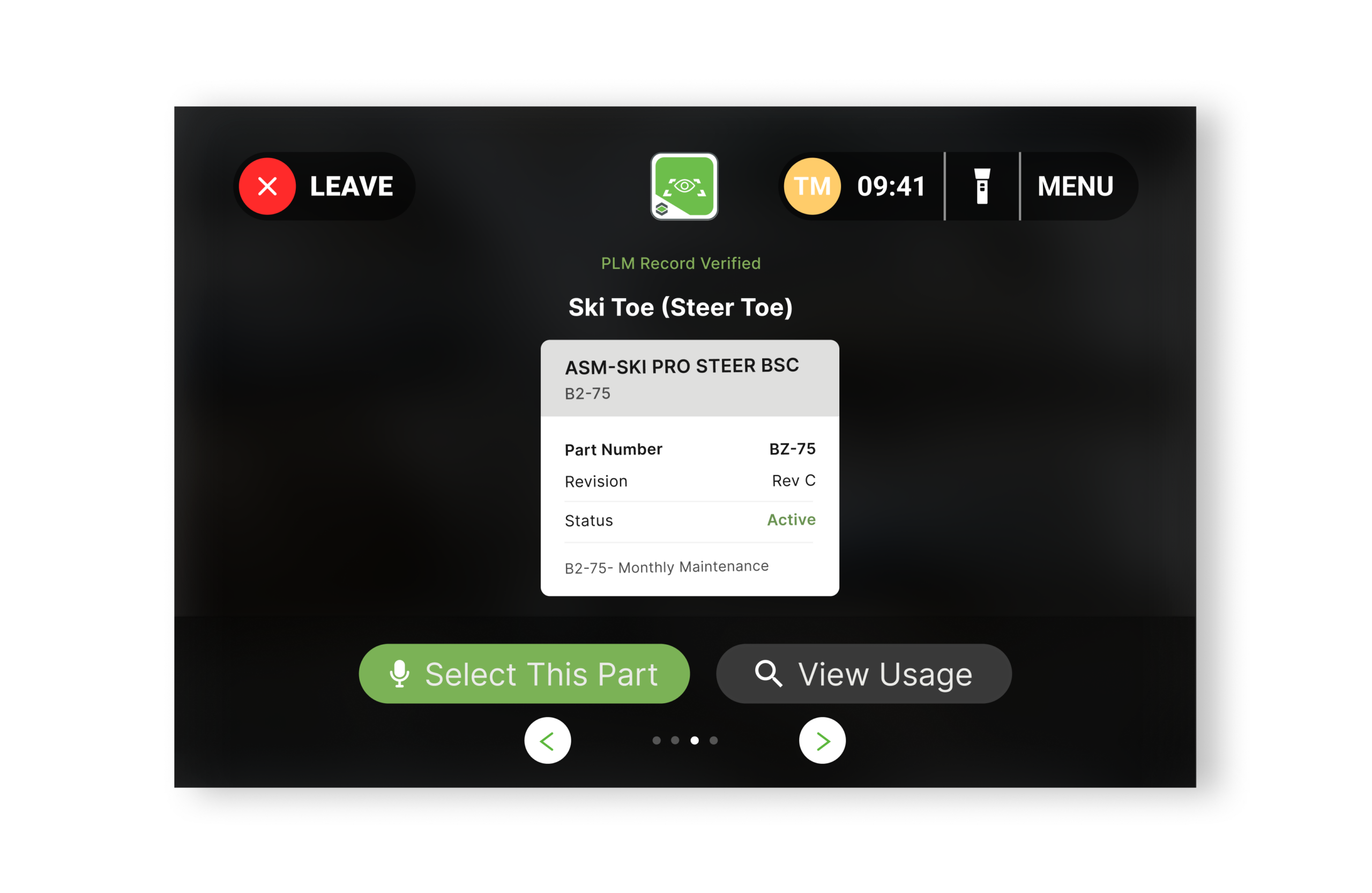

I translated AI limitations (occlusion, lighting sensitivity, latency) into user guidance. Quality Indicators trained technicians to capture optimal images. I mapped raw confidence scores (0.8932) into a 3-tier UI: Green (>85%), Yellow (60-85%), Red (<60%), turning probabilistic output into actionable decisions that built trust.
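The tier mapping described above can be sketched in a few lines of Python. This is an illustrative sketch rather than the shipped implementation: the function name `confidence_tier` and the `TIER_LABELS` table are hypothetical, and treating exactly 85% and exactly 60% as Yellow is an assumption drawn from the stated ranges.

```python
# Hypothetical sketch of the 3-tier mapping: Green (>85%), Yellow (60-85%), Red (<60%).
TIER_LABELS = {
    "green": "High Confidence",
    "yellow": "Needs Review",
    "red": "Try Again",
}


def confidence_tier(score: float) -> str:
    """Map a raw model confidence in [0, 1] to a UI tier name."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {score}")
    if score > 0.85:
        return "green"
    # Assumption: the boundary values 0.85 and 0.60 both fall in the Yellow tier.
    if score >= 0.60:
        return "yellow"
    return "red"


if __name__ == "__main__":
    for raw in (0.8932, 0.72, 0.41):
        tier = confidence_tier(raw)
        print(f"{raw:.4f} -> {tier} ({TIER_LABELS[tier]})")
```

Keeping the thresholds in one small function means the technician only ever sees a tier and an action, while the raw score stays available for logging and model improvement.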

I built a Unity prototype proving the system worked in real factories, not labs. That demo unlocked $1.7M funding and a 17-person team across 6 countries. Pilots with Porsche, German automotive, and IKEA converted to contracts, generating $20M ARR. Design became a business pillar.

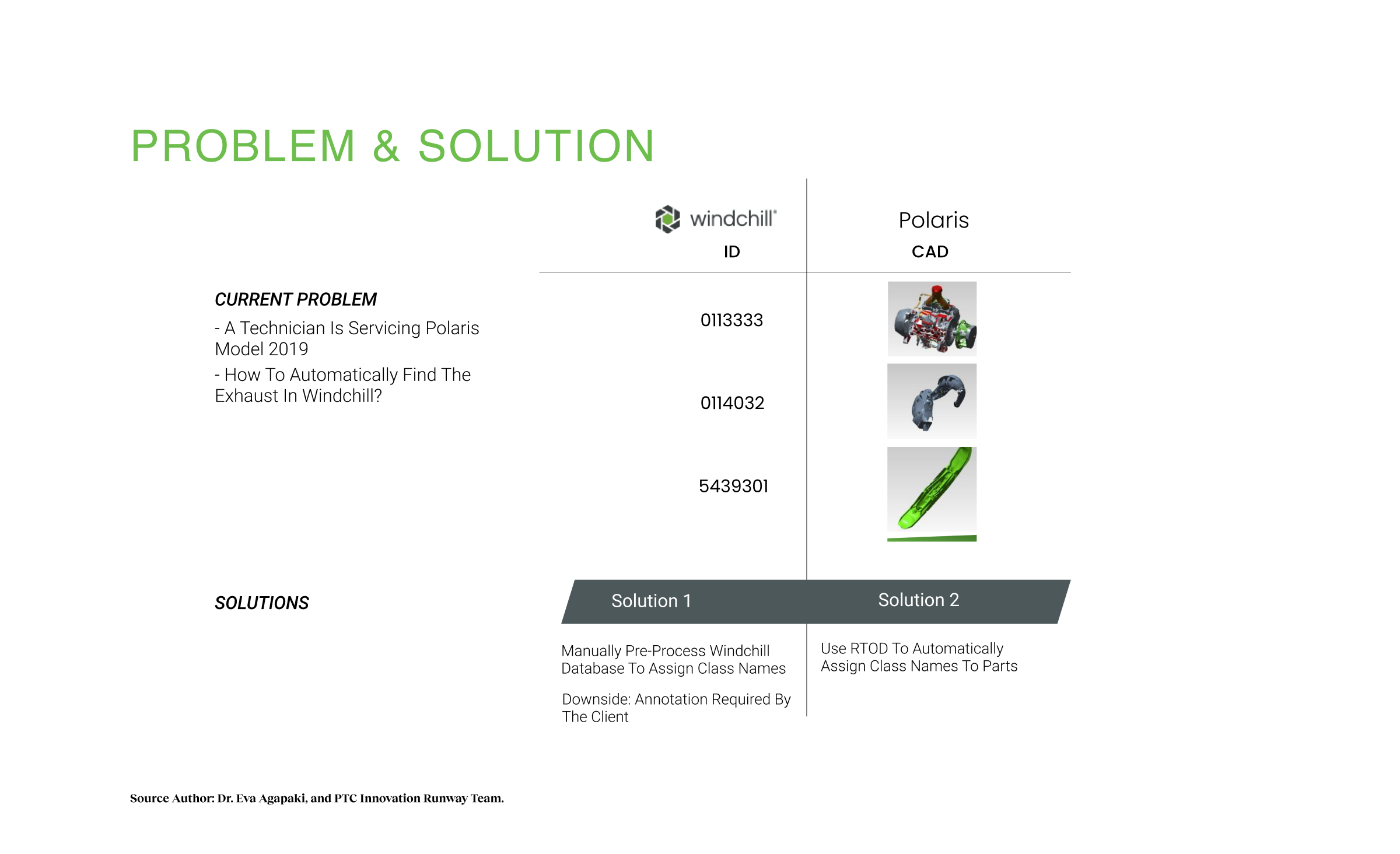

Problem Context

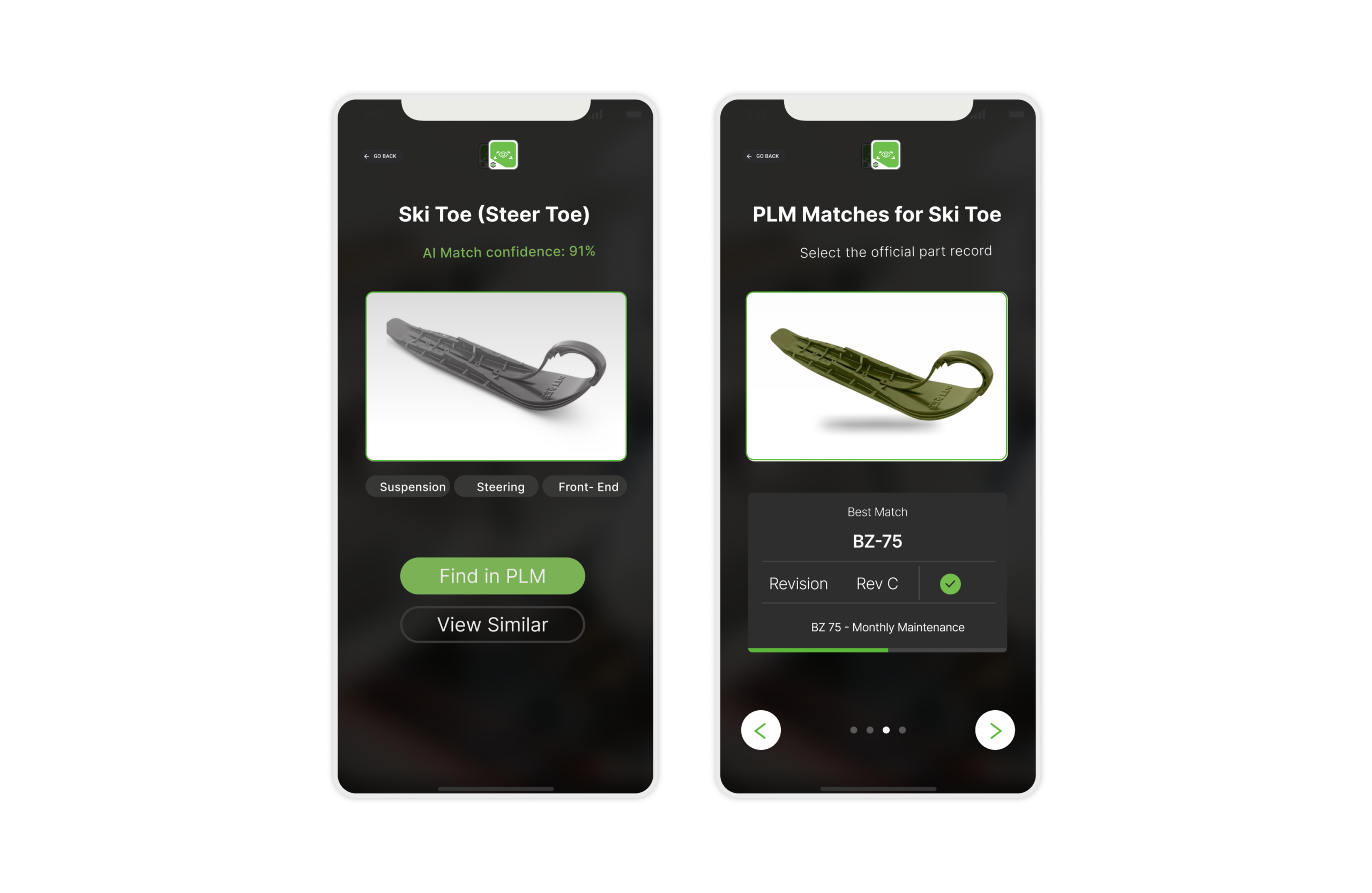

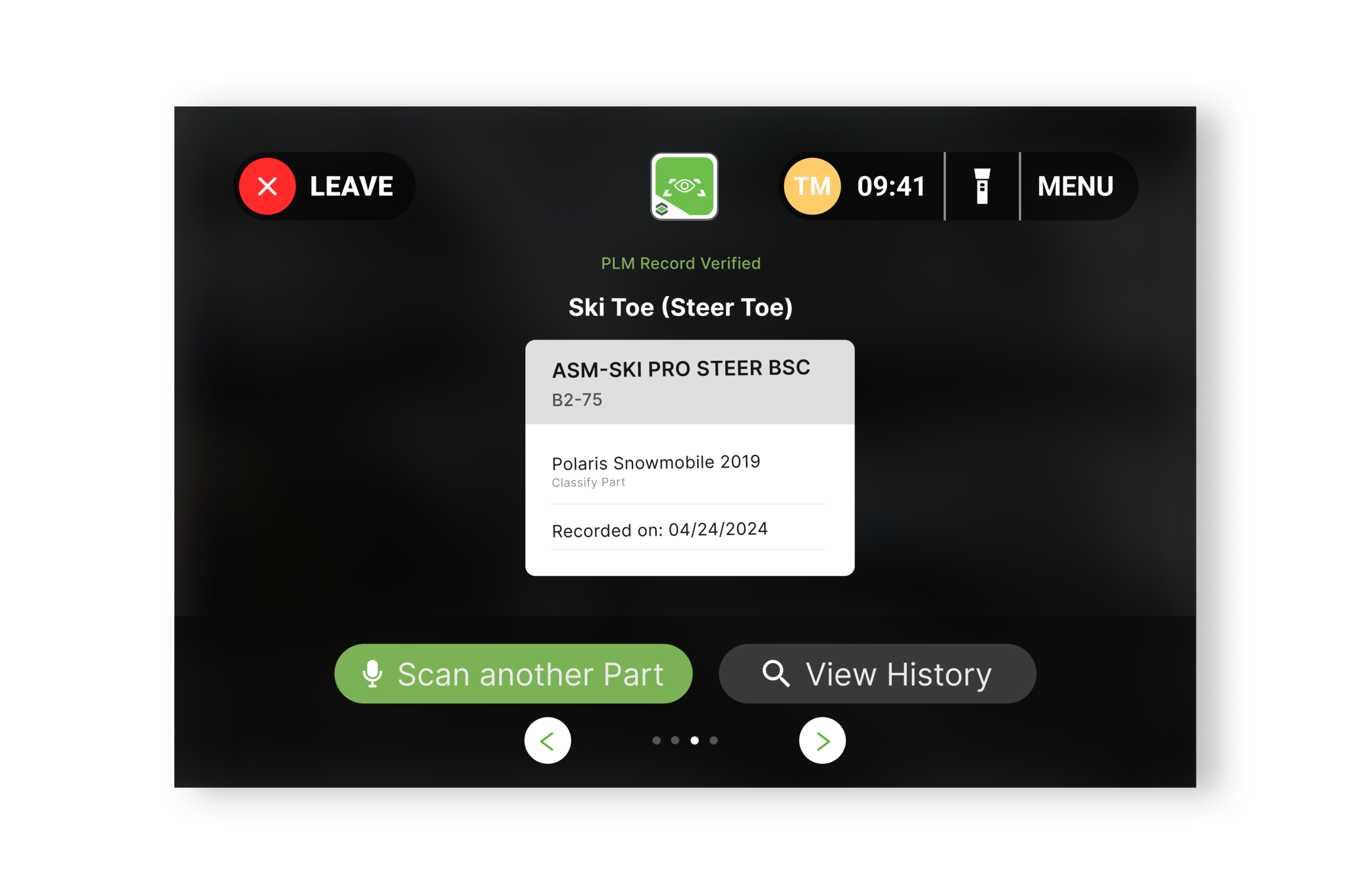

The industrial service workflow was broken. A technician servicing a 2019 Polaris 850 Patriot might spend 15-20 minutes identifying a single faulty component. The existing process relied on visual matching against outdated PDF catalogs, resulting in a 50% error rate. Parts like specific bolts or water pump assemblies look identical but have vastly different specifications. Order the wrong variant, and the factory line stops. That’s millions in downtime.

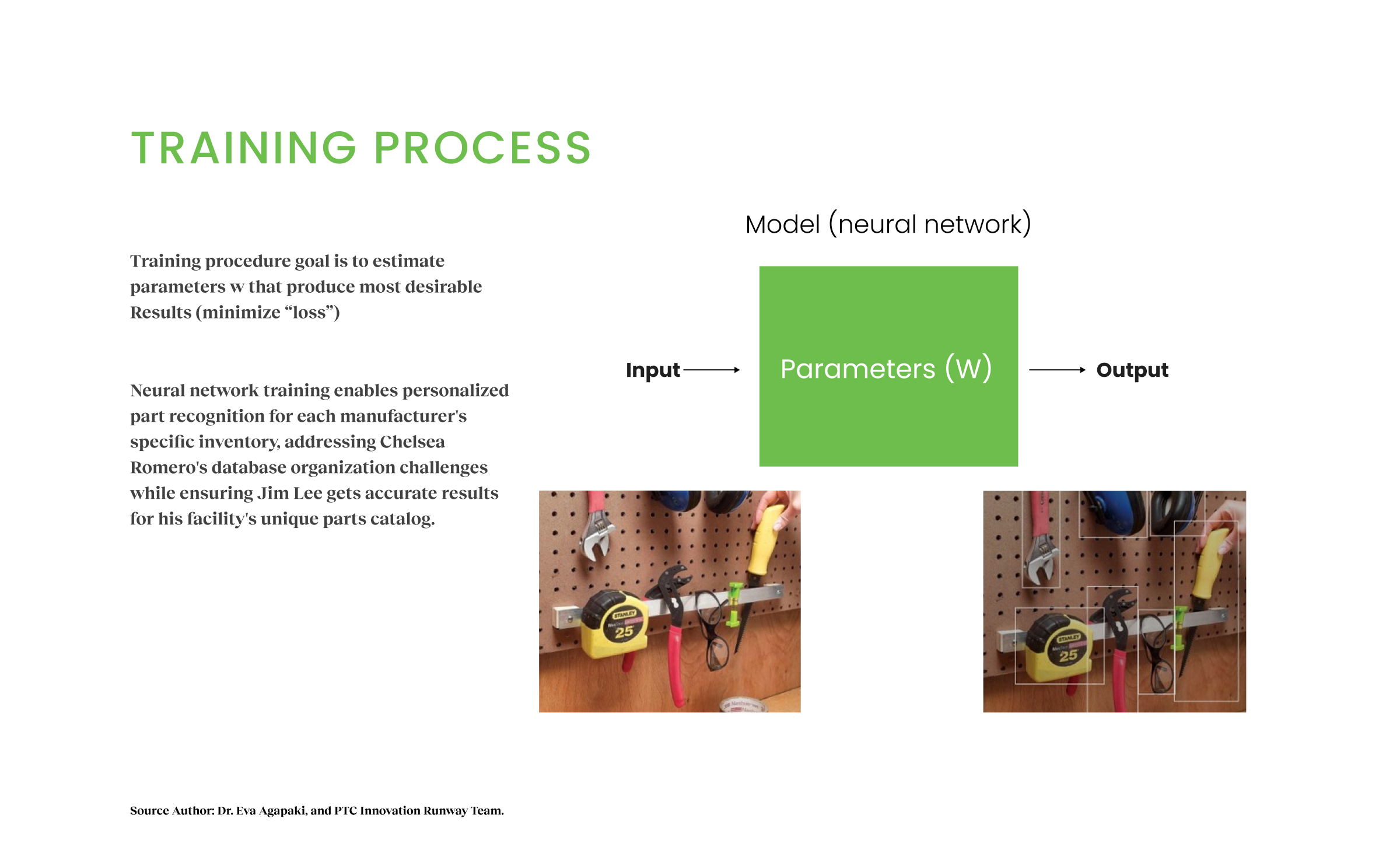

Systemically, the barrier was threefold. First, data availability: training AI requires thousands of photos per part. For clients with 20,000+ parts, gathering this was operationally impossible. PTC’s research team had solved this with synthetic CAD training, but the AI sat in labs for six years, not shipping. Second, the productization gap: raw AI output was probabilistic (bounding boxes, confidence scores), not actionable. Third, context complexity: technicians work in low-light, dirty, safety-critical environments where mistakes have consequences. The design intervention needed to bridge the fidelity gap between pristine CAD models and gritty factory reality, while proving to leadership that research could become a fundable product.

My Approach

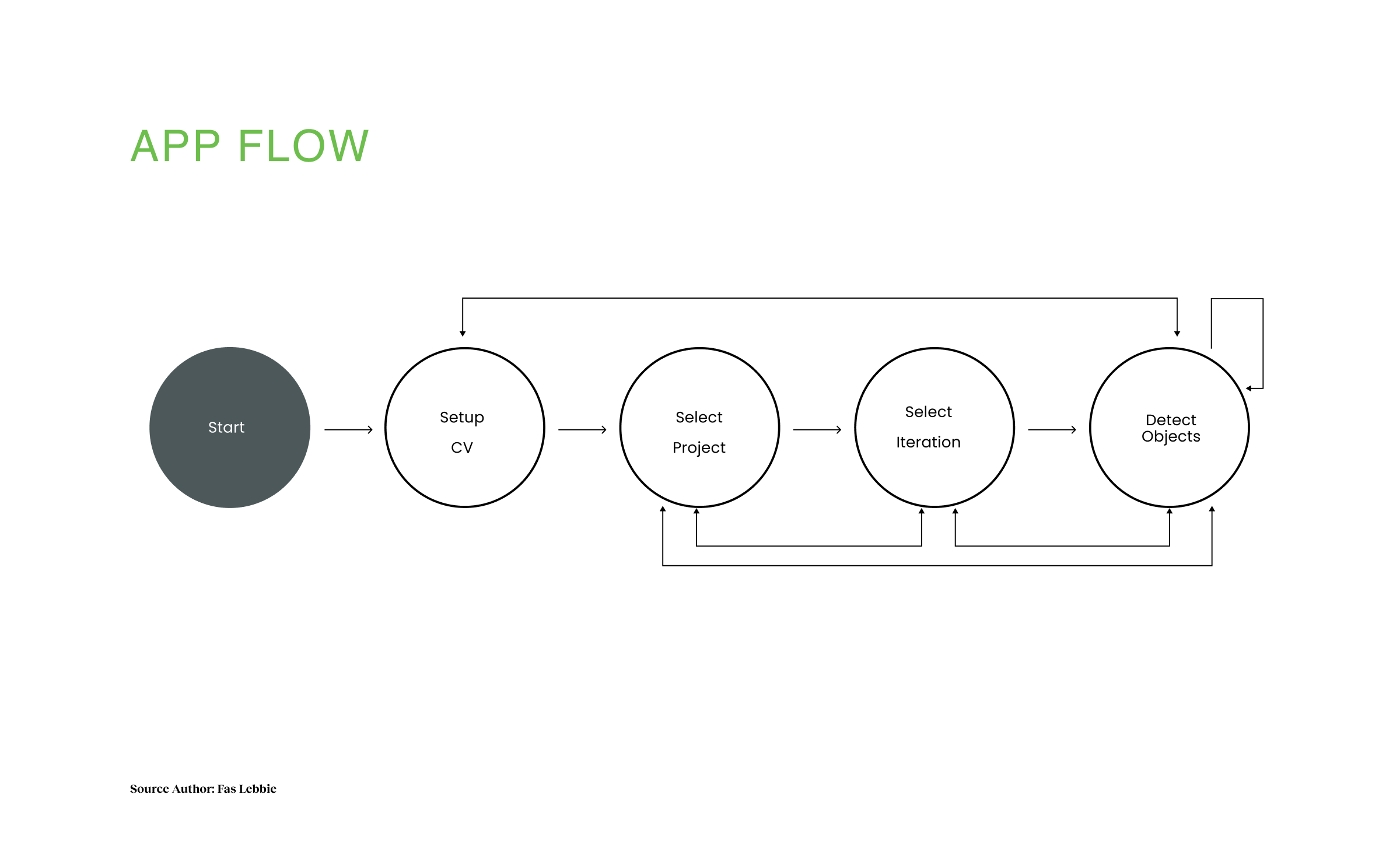

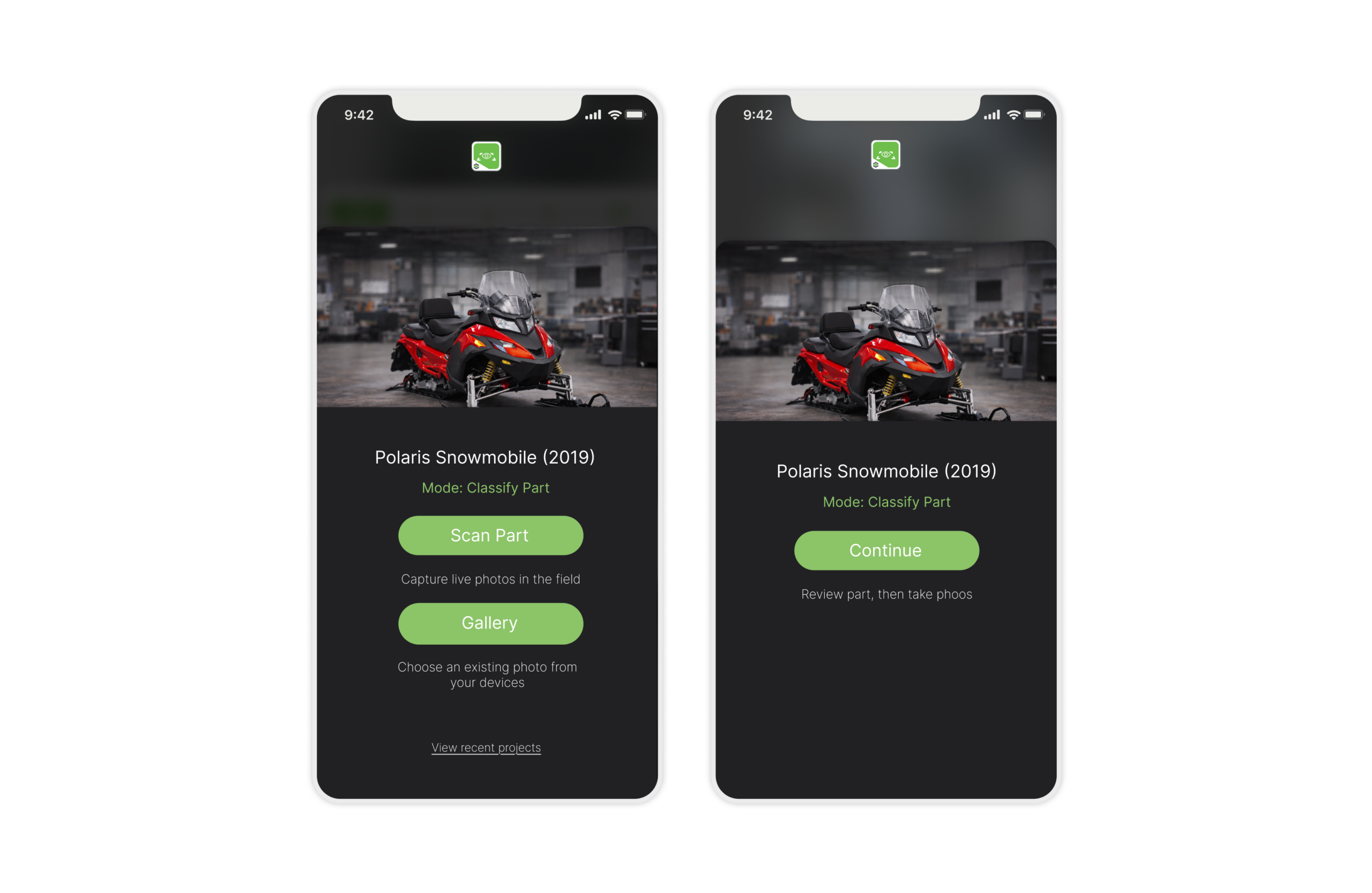

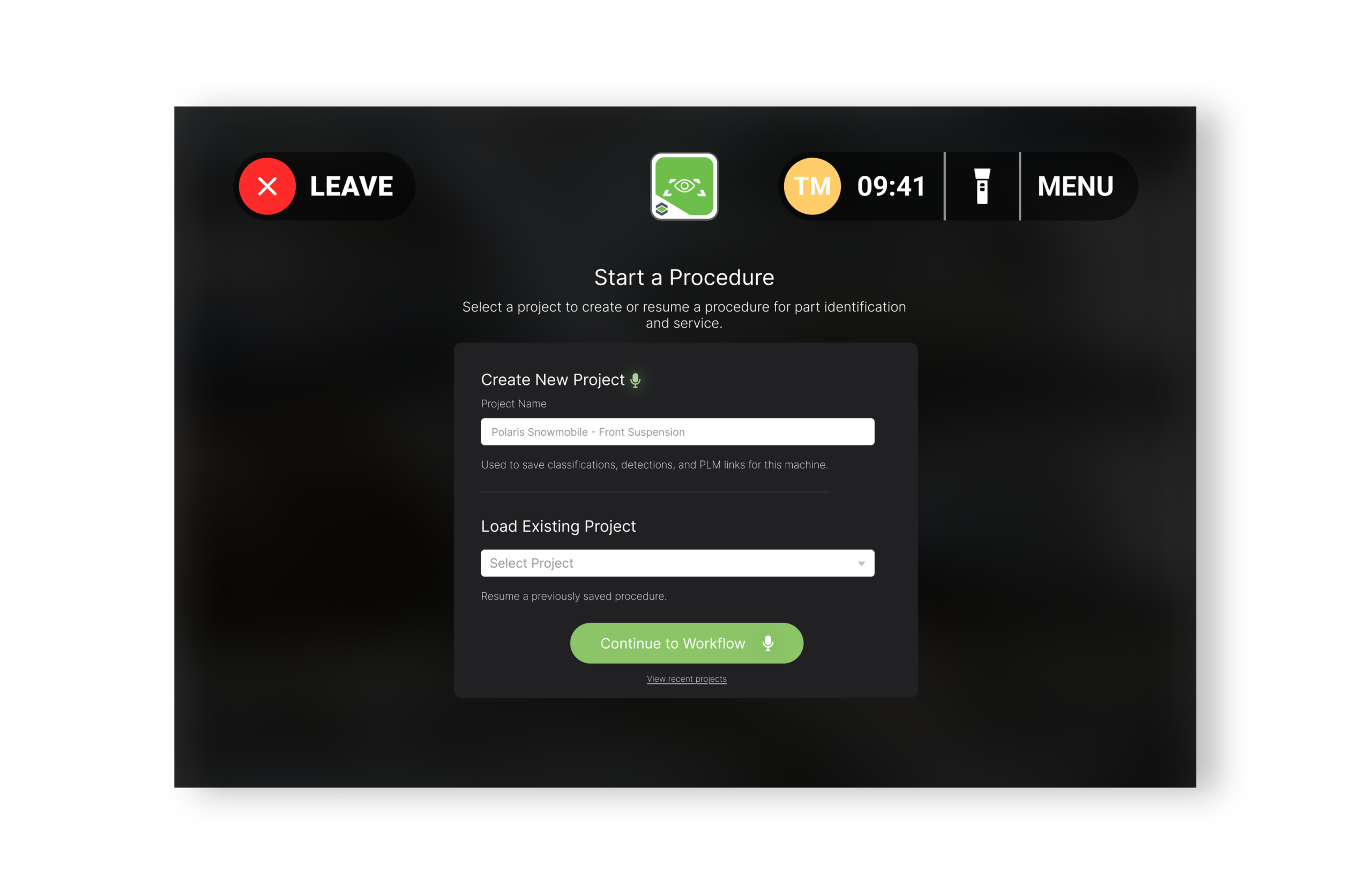

I began this project by crafting a context-first strategy to bridge probabilistic AI with human action, designing adaptive, multimodal interfaces: precision touch for mobile, voice command for RealWear. I validated flows through rapid Unity prototyping, moving beyond Figma to test how latency, lighting, and occlusion actually felt on the factory floor, ensuring a performant, field-ready experience.

Design Process

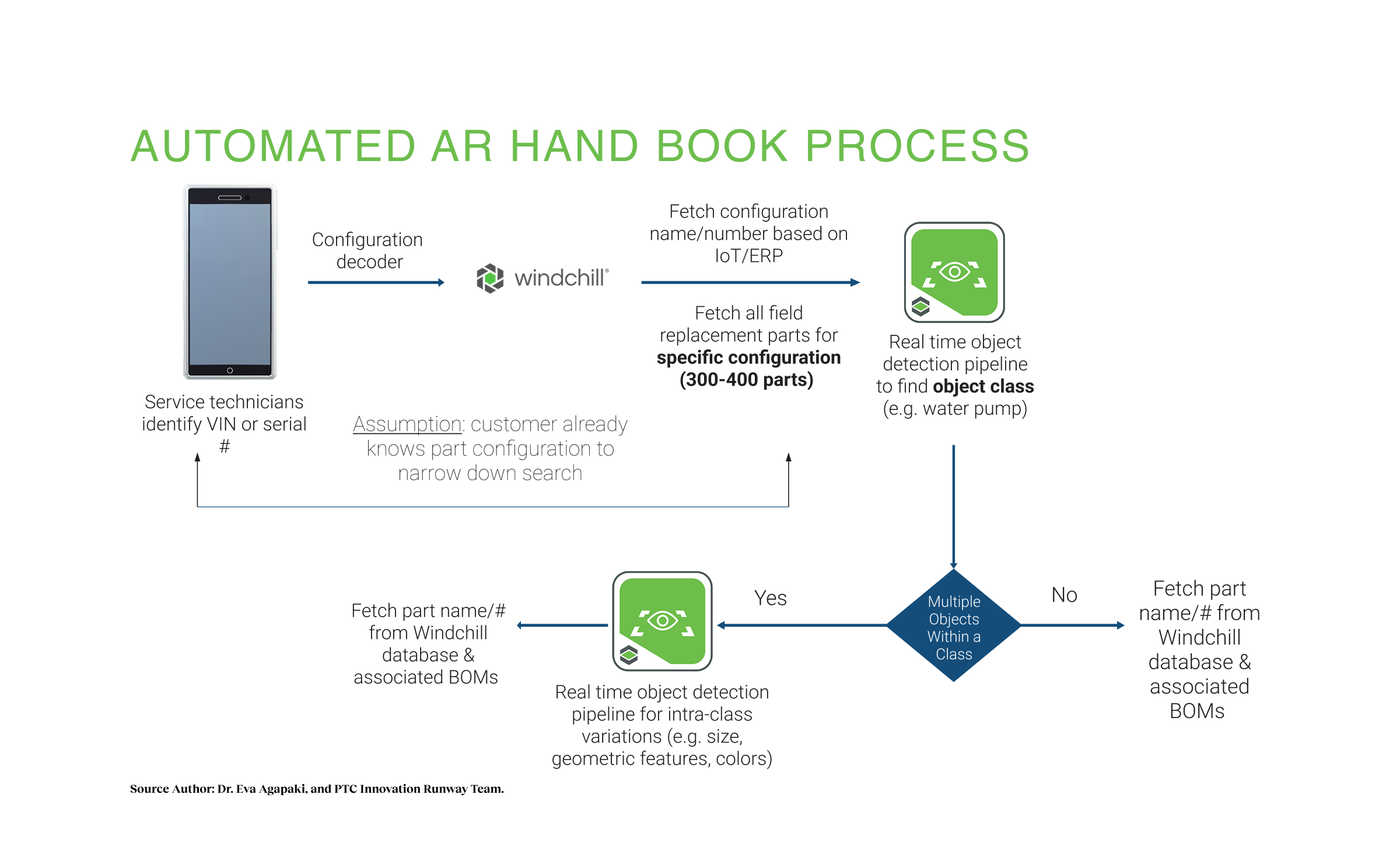

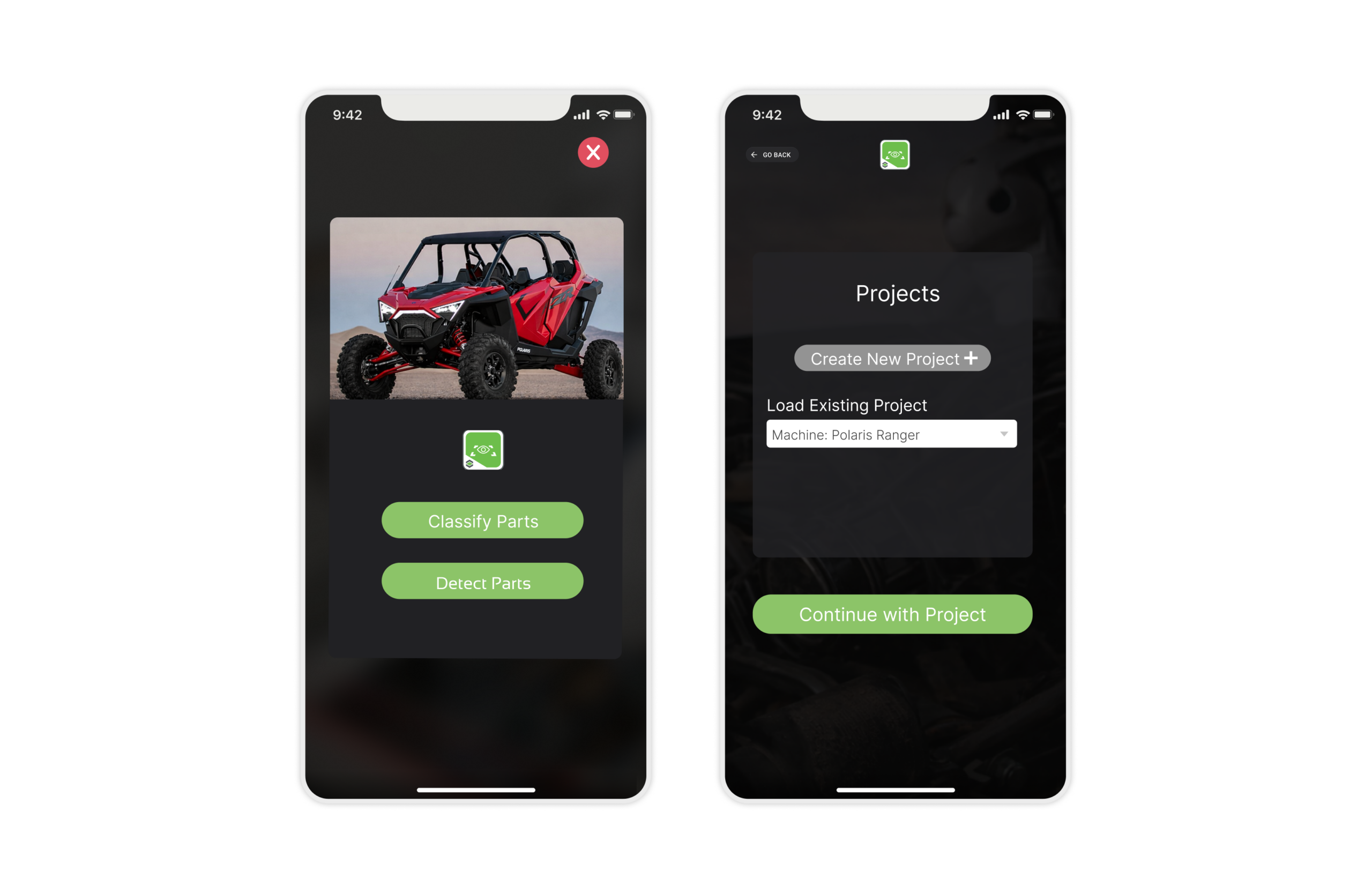

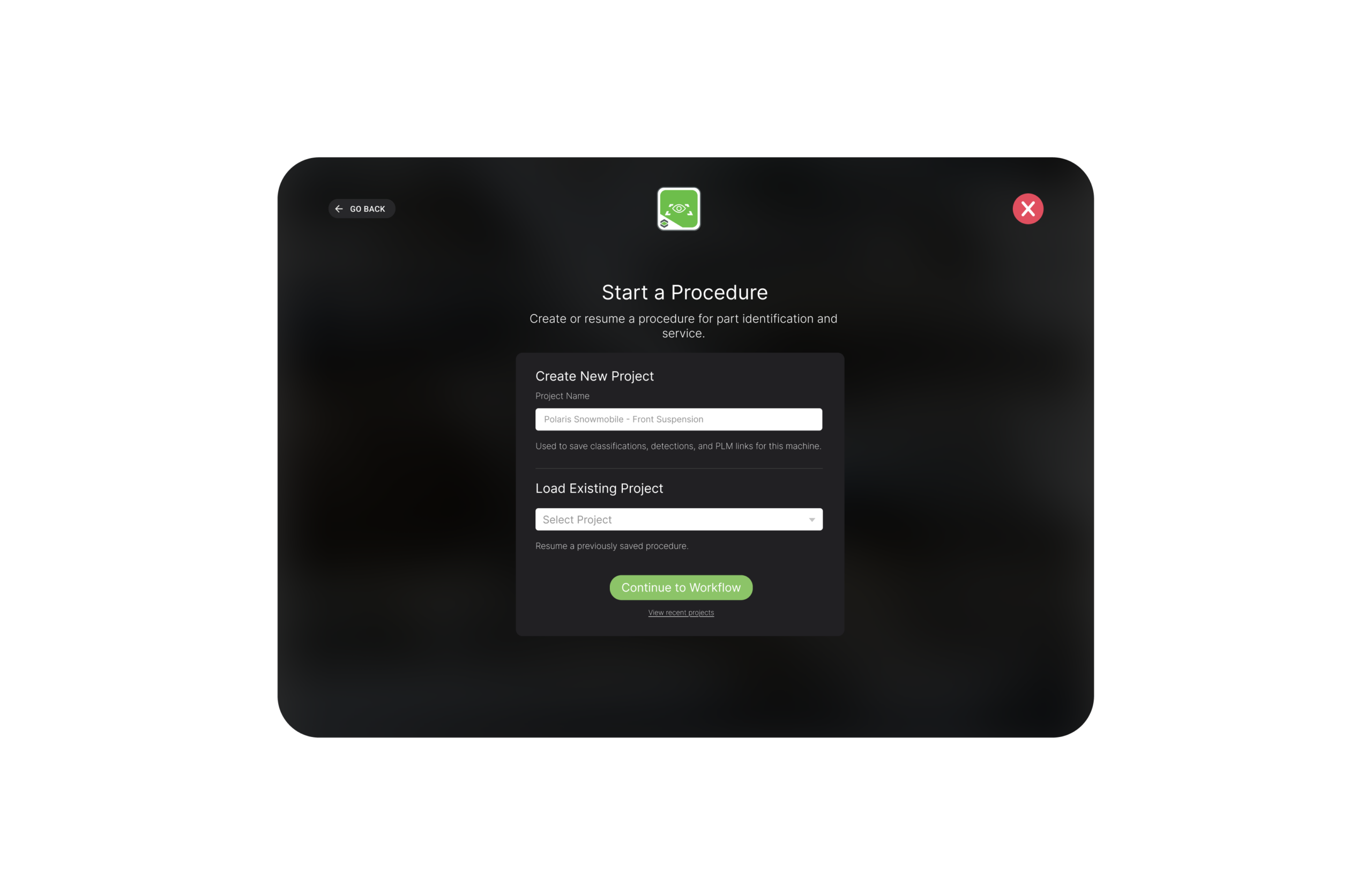

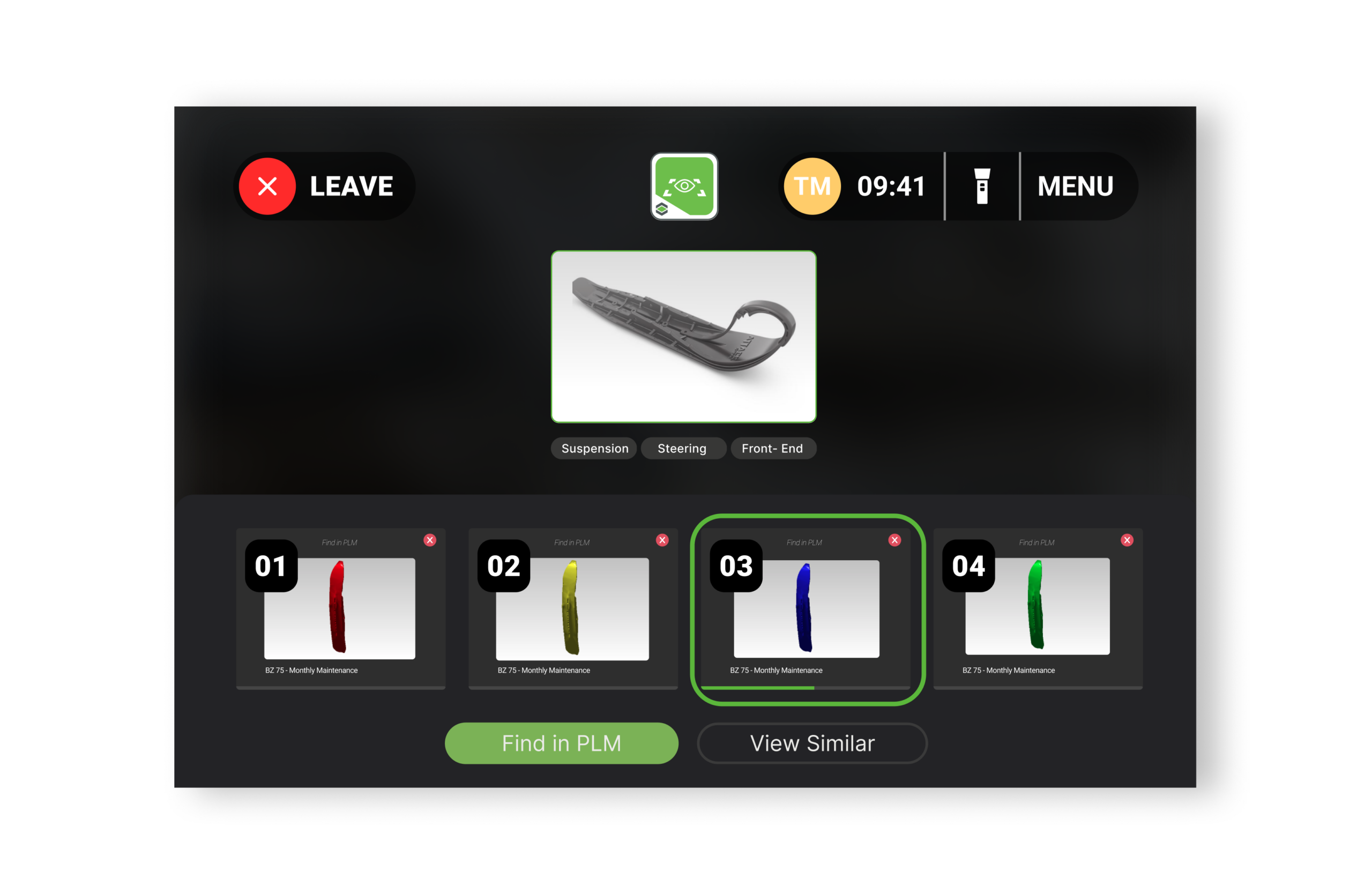

When I joined, the team had a command-line demo but no app. Research into competitors like Slyce showed they focused on retail, identifying shoes from global databases. But manufacturing is different. A technician doesn’t need to search the entire world of parts; they only need parts inside that specific machine. Our feasibility analysis revealed AI cannot reliably distinguish millions of parts globally on mobile devices. We established the “20-200 Part Rule”: the UX filters datasets down to <200 parts per session.

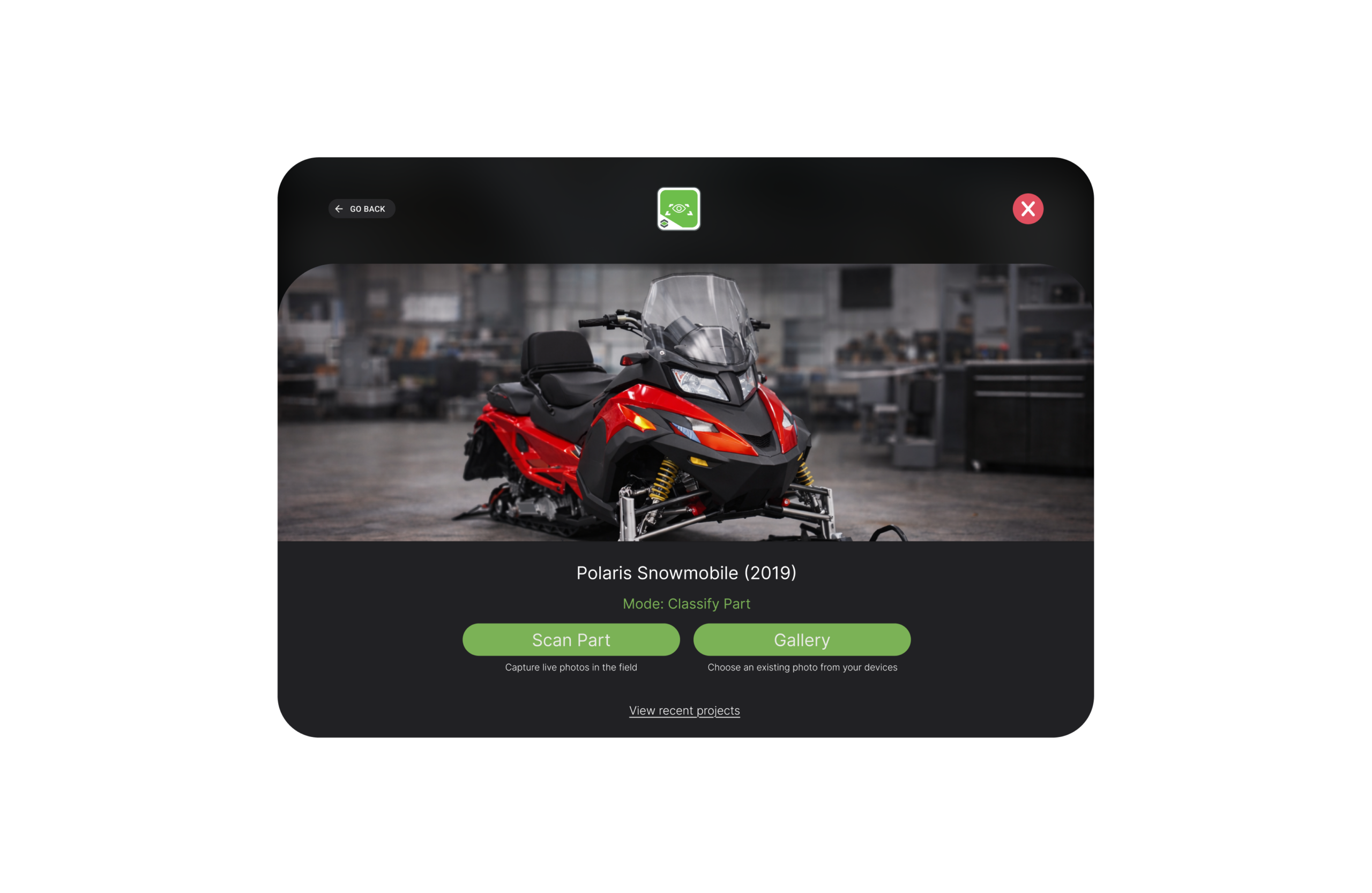

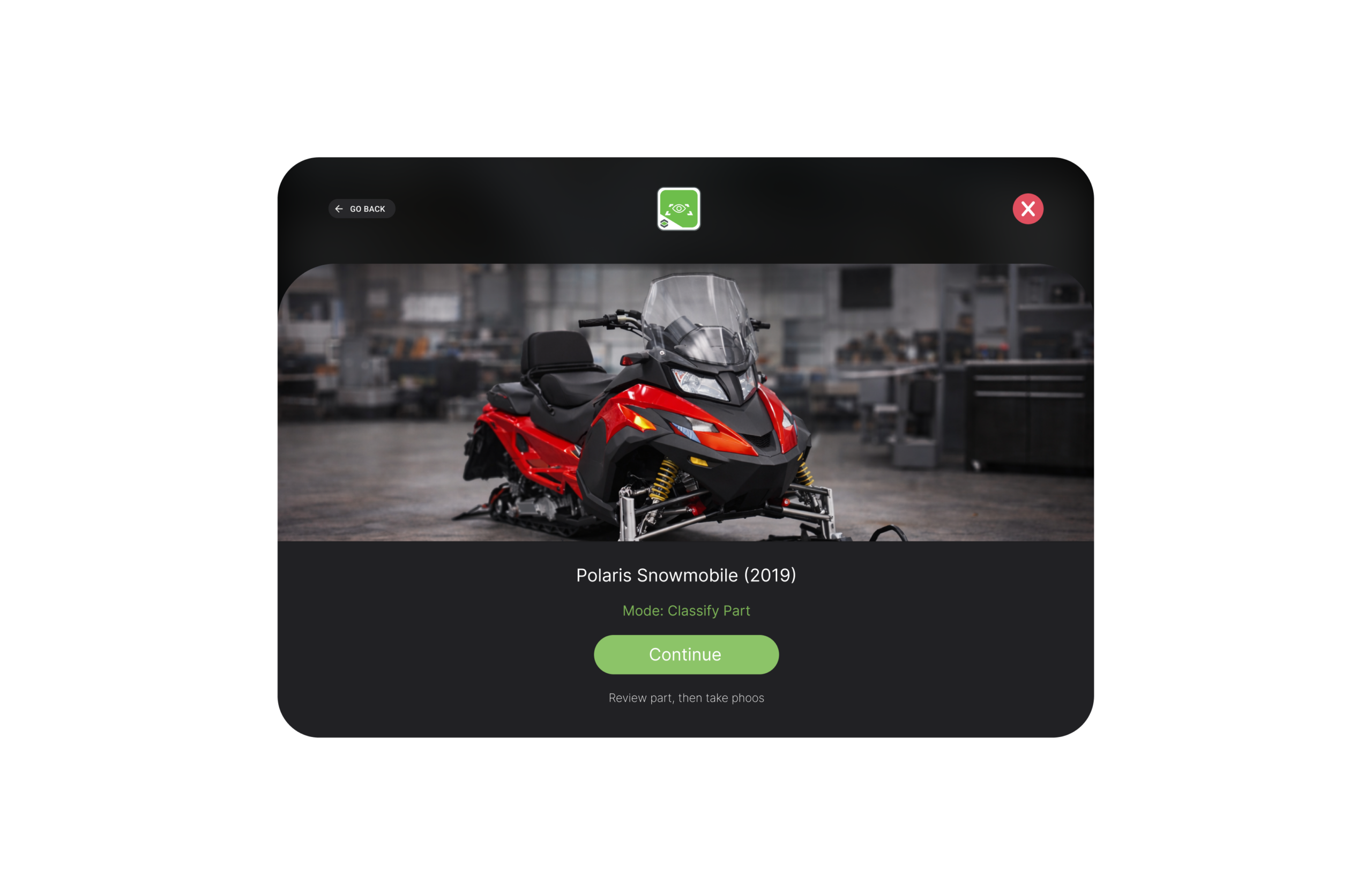

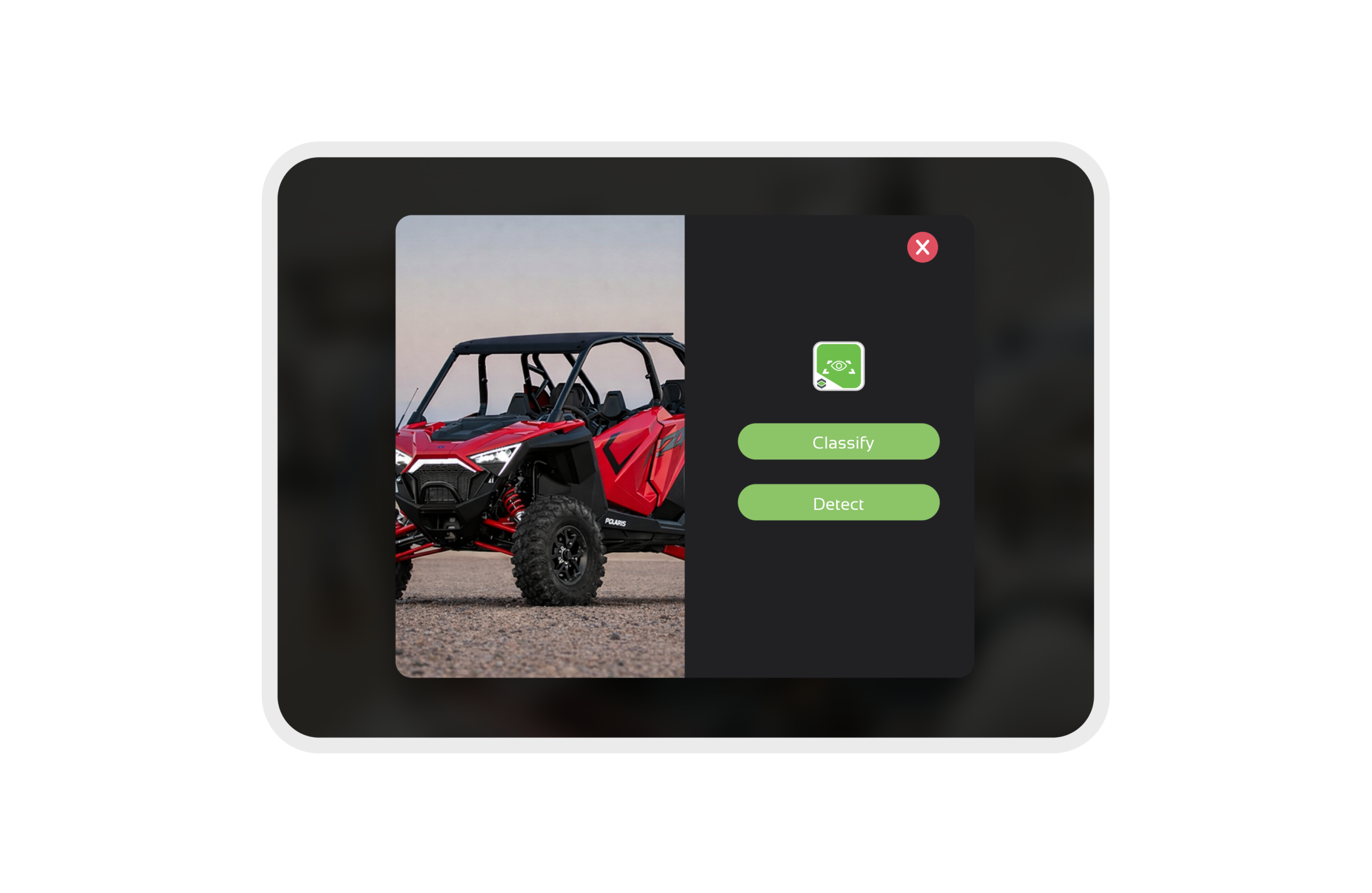

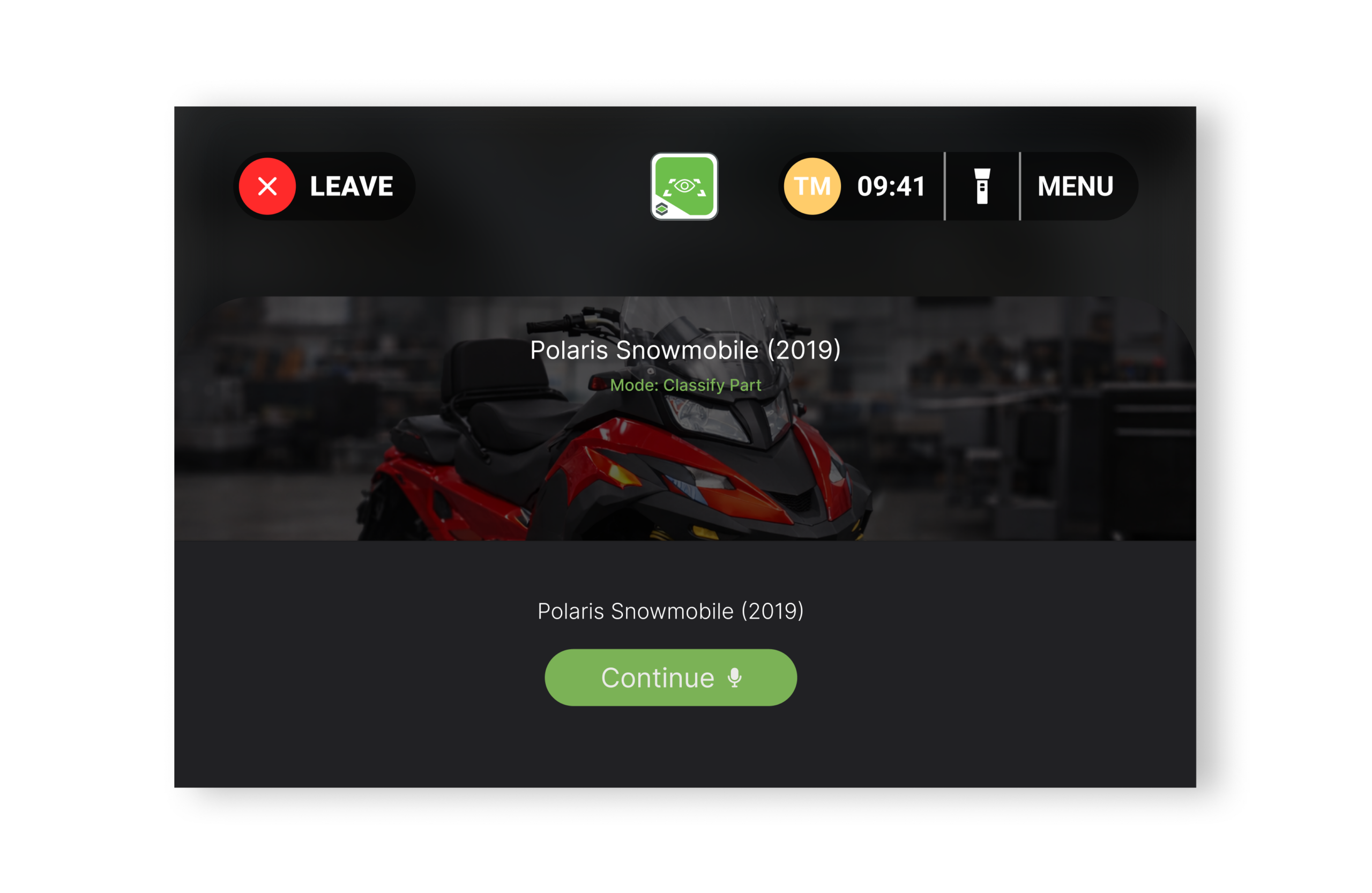

This insight drove our Context-First Strategy:

- Context First (The Filter): User identifies the vehicle model (e.g., Polaris 2019), allowing AI to ignore millions of irrelevant parts.

- Visual Verification (The Scan): Only then does the user scan the specific part within that smaller subset.
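The two steps above can be sketched as a filter followed by a restricted ranking. Everything in this sketch is hypothetical: the `Part` record, the in-memory catalog, and the `scores` dictionary standing in for model output are assumptions for illustration, not the actual Windchill or Vuforia integration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# The text describes filtering to fewer than 200 parts per session.
MAX_PARTS_PER_SESSION = 200


@dataclass(frozen=True)
class Part:
    part_id: str
    name: str
    machine_model: str


def candidate_parts(catalog: List[Part], machine_model: str) -> List[Part]:
    """Step 1 (the filter): keep only parts that belong to the chosen machine."""
    subset = [p for p in catalog if p.machine_model == machine_model]
    if len(subset) >= MAX_PARTS_PER_SESSION:
        raise ValueError(
            f"{len(subset)} candidates for {machine_model!r}; narrow the context further"
        )
    return subset


def best_match(scores: Dict[str, float], candidates: List[Part]) -> Optional[Part]:
    """Step 2 (the scan): pick the top-scoring part, considering candidates only."""
    allowed = {p.part_id: p for p in candidates}
    scored = [(scores[pid], pid) for pid in allowed if pid in scores]
    if not scored:
        return None
    _, top_id = max(scored)
    return allowed[top_id]


if __name__ == "__main__":
    catalog = [
        Part("P-1", "Water pump assembly", "Polaris 2019"),
        Part("P-2", "Drive belt", "Polaris 2019"),
        Part("P-3", "Water pump assembly", "Other model"),
    ]
    subset = candidate_parts(catalog, "Polaris 2019")
    # P-3 scores highest but is ignored because it is outside the selected context.
    print(best_match({"P-1": 0.91, "P-2": 0.40, "P-3": 0.97}, subset))
```

The design point is that a lookalike part from another machine can never win the ranking, because it is removed before the scan result is interpreted.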

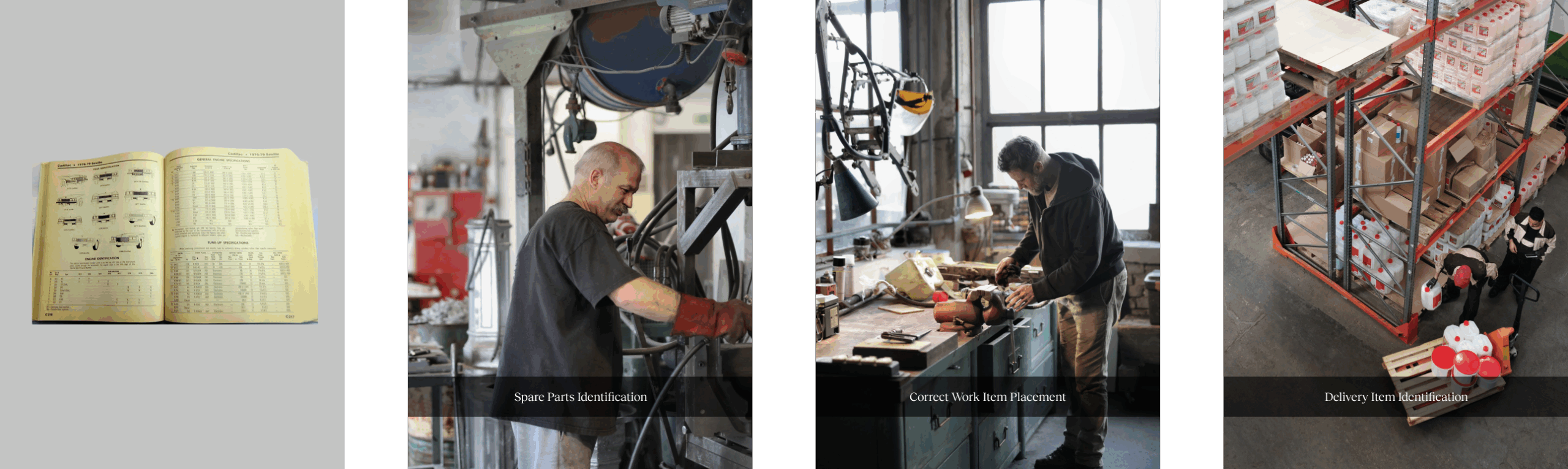

Through field research across 10+ manufacturing facilities, I identified four personas with distinct jobs to be done, but only three became primary users.

Jim Lee (Service Technician) needed to identify single parts fast under pressure. He noted, “Many times, I make mistakes, and the process takes time.” This inefficiency rippled to Anna Rodriguez (Factory Operator), who confirmed manual errors increase operational costs: “I end up ordering obsolete parts that I don’t need.”

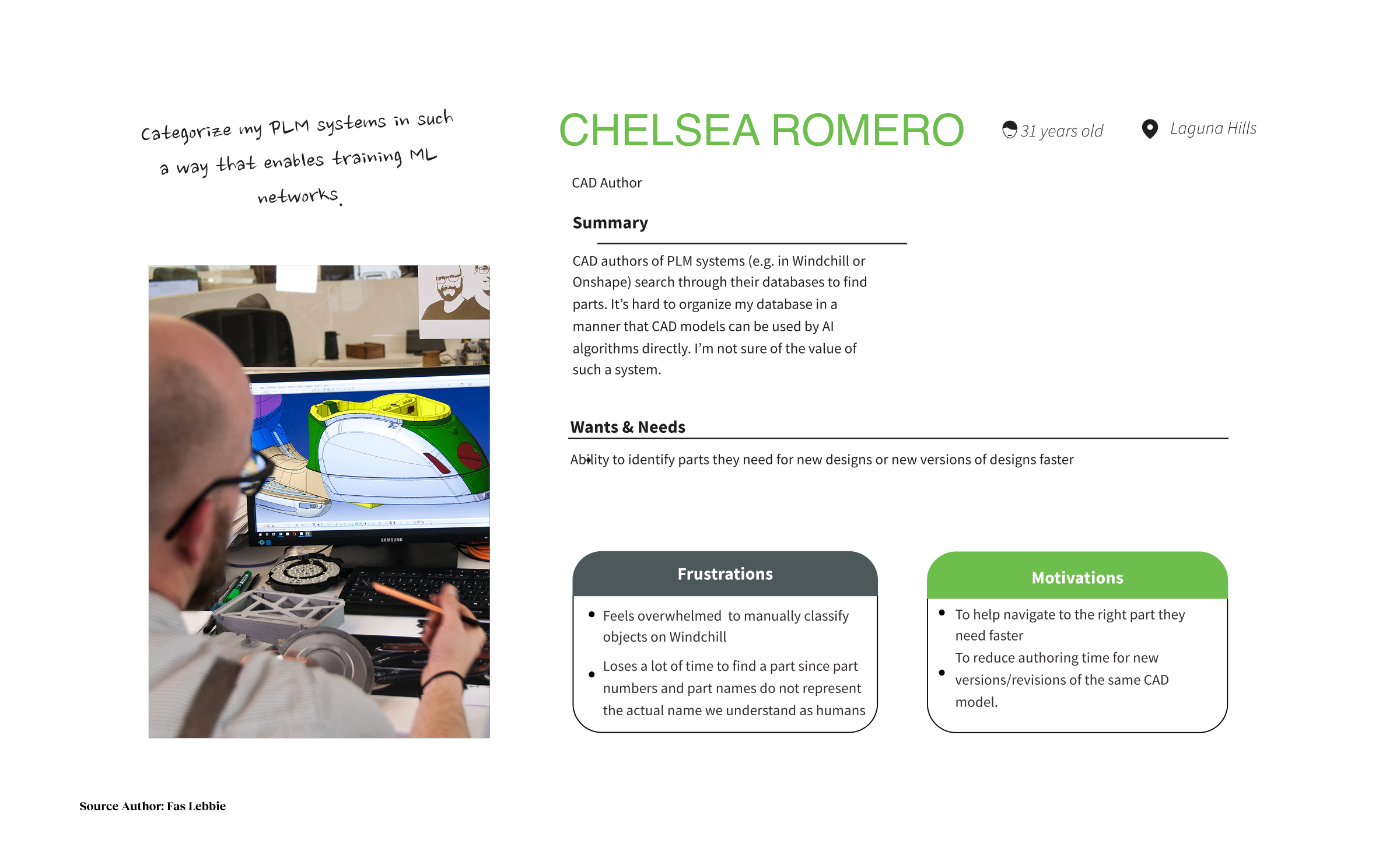

Upstream, James Romero (CAD Author) revealed the root cause: “It’s hard to organize my database so that CAD models can be used by AI algorithms directly.” This bottleneck limited Hannah James (AR Service Provider), the intermediary between manufacturers and software: “there is a limited set of 3D models users can automatically place in the physical world.”

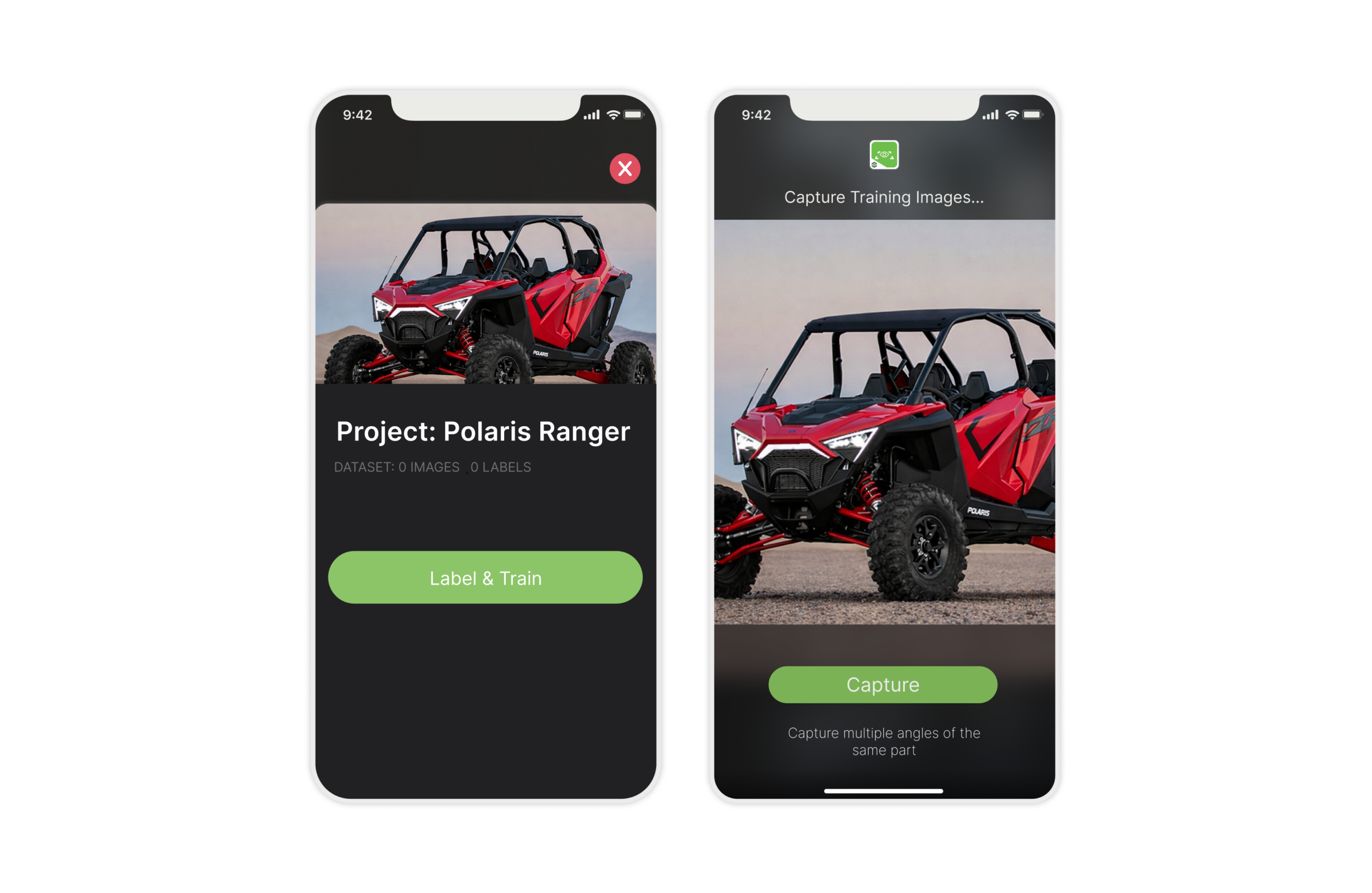

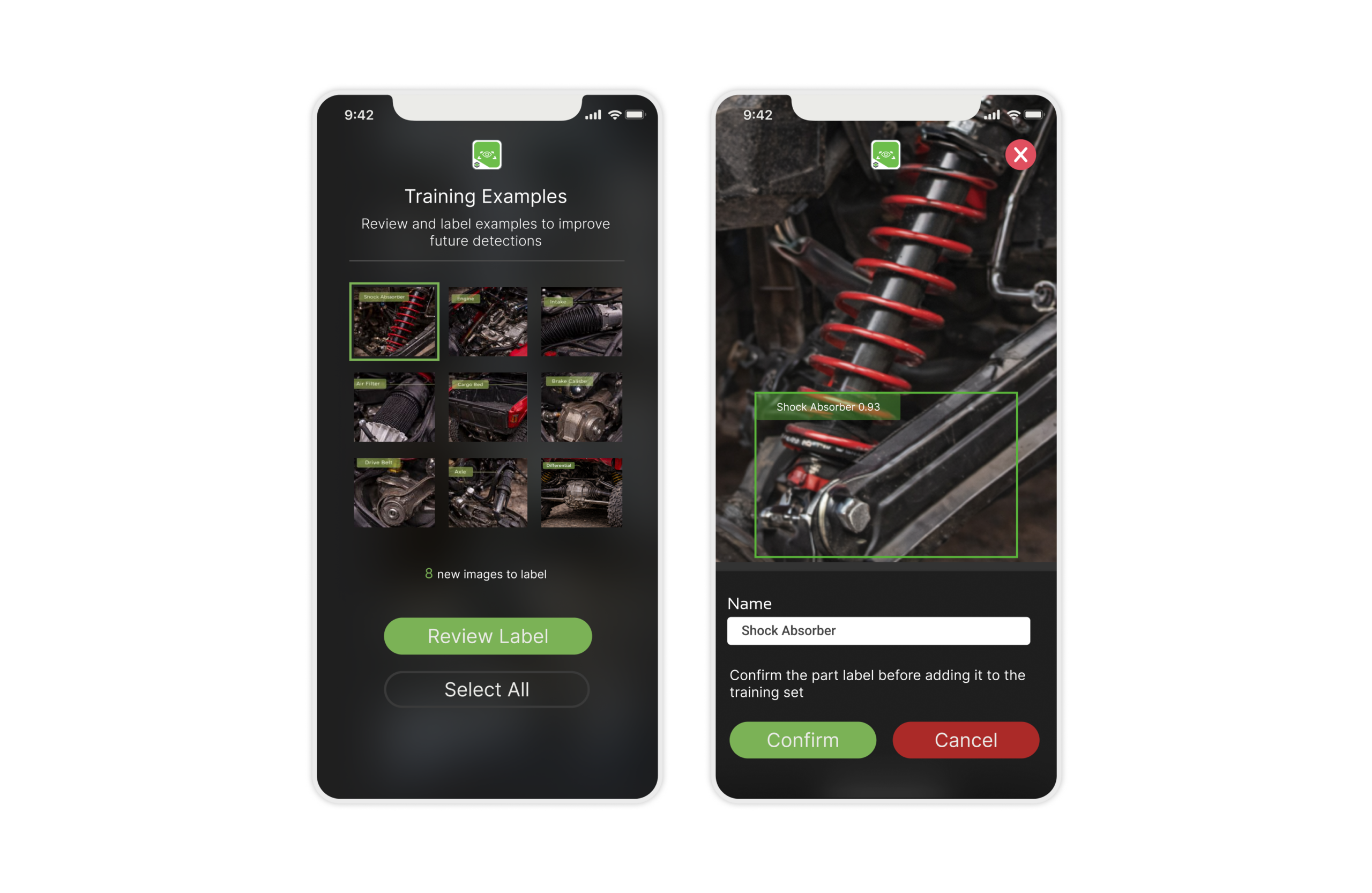

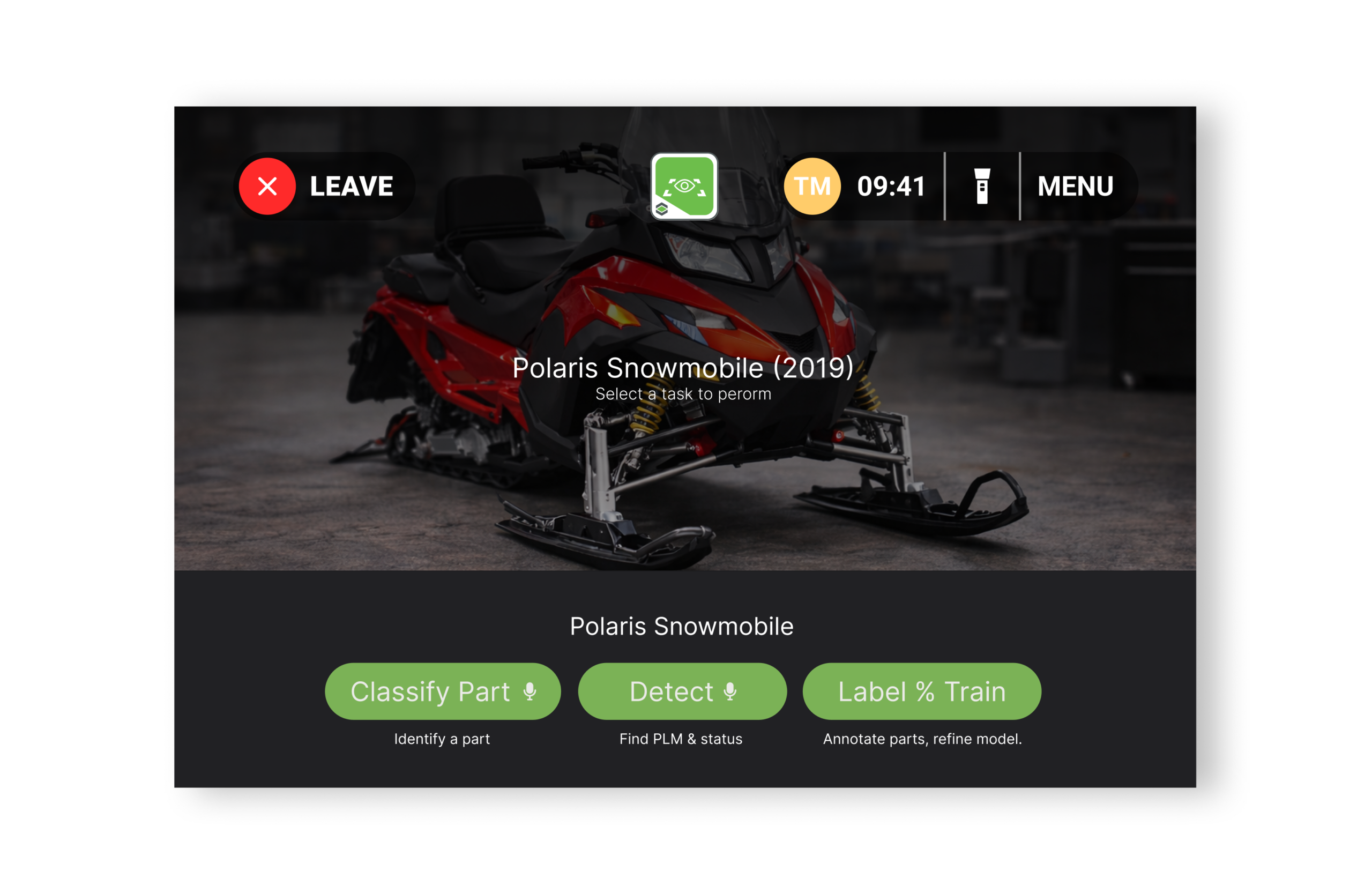

I designed three flows: Classify for Jim (single parts, speed), Detect for Anna (multiple assets, scale), Label & Train for Hannah (system improvement). James was excluded. His work is infrastructure, not interaction.

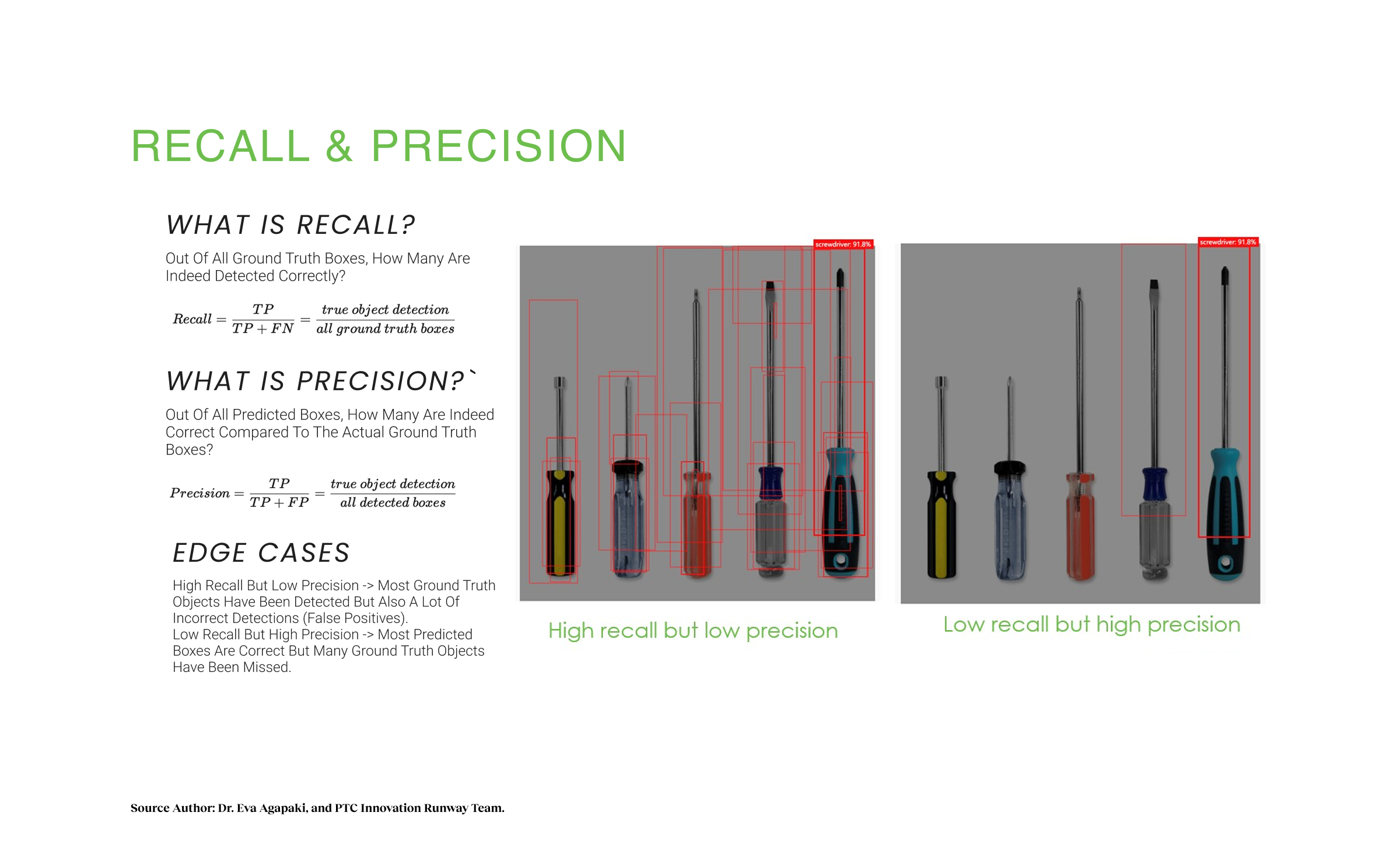

- Goal: Trust through Precision. Technicians don’t trust tools that guess; false positives destroy confidence. We aimed for >90% precision to minimize abandonment and ensure trust for critical repairs.

- Goal: Velocity through Context. Manual searches take 15-20 minutes, bleeding efficiency and increasing downtime. We targeted reducing “Time to First Decision” to under 60 seconds by automating the link between visual input and Windchill data.

- Goal: Viability through Scale. Creating training data for millions of parts is operationally impossible. We validated a “Zero-Shot” pipeline using synthetic CAD training to yield field-ready models, measuring success by reduced onboarding time for enterprise clients.

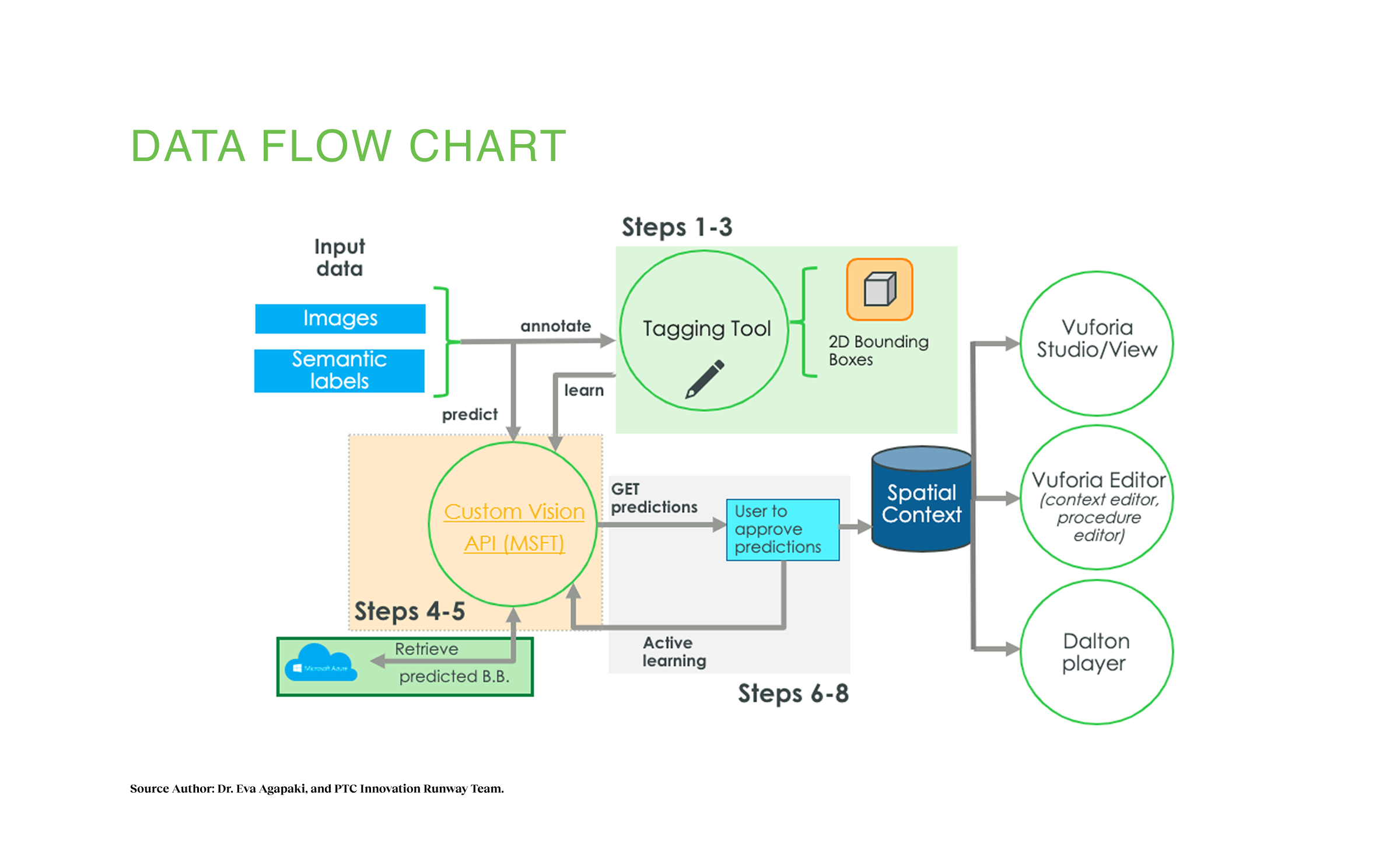

We first evaluated manual annotation, where clients would tag their own databases. We rejected this immediately because it shifted the burden to customers. Instead, we committed to Automated RTOD using our synthetic pipeline to assign class names without client intervention, solving the adoption barrier.

Constraint-Driven Design:

- Insight: The AI needs good data, but technicians aren’t photographers.

- Design Response: I created Quality Indicators in the camera view: visual guides that turn green only when lighting and stability are sufficient, training users to capture better input.

- Insight: RealWear users experience high cognitive load.

- Design Response: I stripped away 80% of mobile’s information, showing only Part Name and Confirm button to reduce eye strain and cognitive overhead.
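The Quality Indicator gating described in the list above can be illustrated with a small check that only reports "ready" when both lighting and stability pass. This is a sketch under stated assumptions: the `FrameStats` fields, the threshold values, and the hint strings are all hypothetical and are not taken from the shipped product.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical thresholds; real values would come from field testing.
MIN_BRIGHTNESS = 60.0  # mean luma on a 0-255 scale
MAX_MOTION = 0.2       # unitless shake estimate, 0 means perfectly still


@dataclass(frozen=True)
class FrameStats:
    mean_brightness: float
    motion: float


def quality_indicator(stats: FrameStats) -> Tuple[bool, List[str]]:
    """Return (ready, hints). The guide turns green only when ready is True."""
    hints = []
    if stats.mean_brightness < MIN_BRIGHTNESS:
        hints.append("Add light or move closer to a light source")
    if stats.motion > MAX_MOTION:
        hints.append("Hold the device steady")
    return (not hints, hints)


if __name__ == "__main__":
    print(quality_indicator(FrameStats(mean_brightness=120.0, motion=0.05)))
    print(quality_indicator(FrameStats(mean_brightness=30.0, motion=0.5)))
```

Returning hints alongside the pass/fail flag reflects the stated goal of training users to capture better input, rather than simply blocking the scan.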

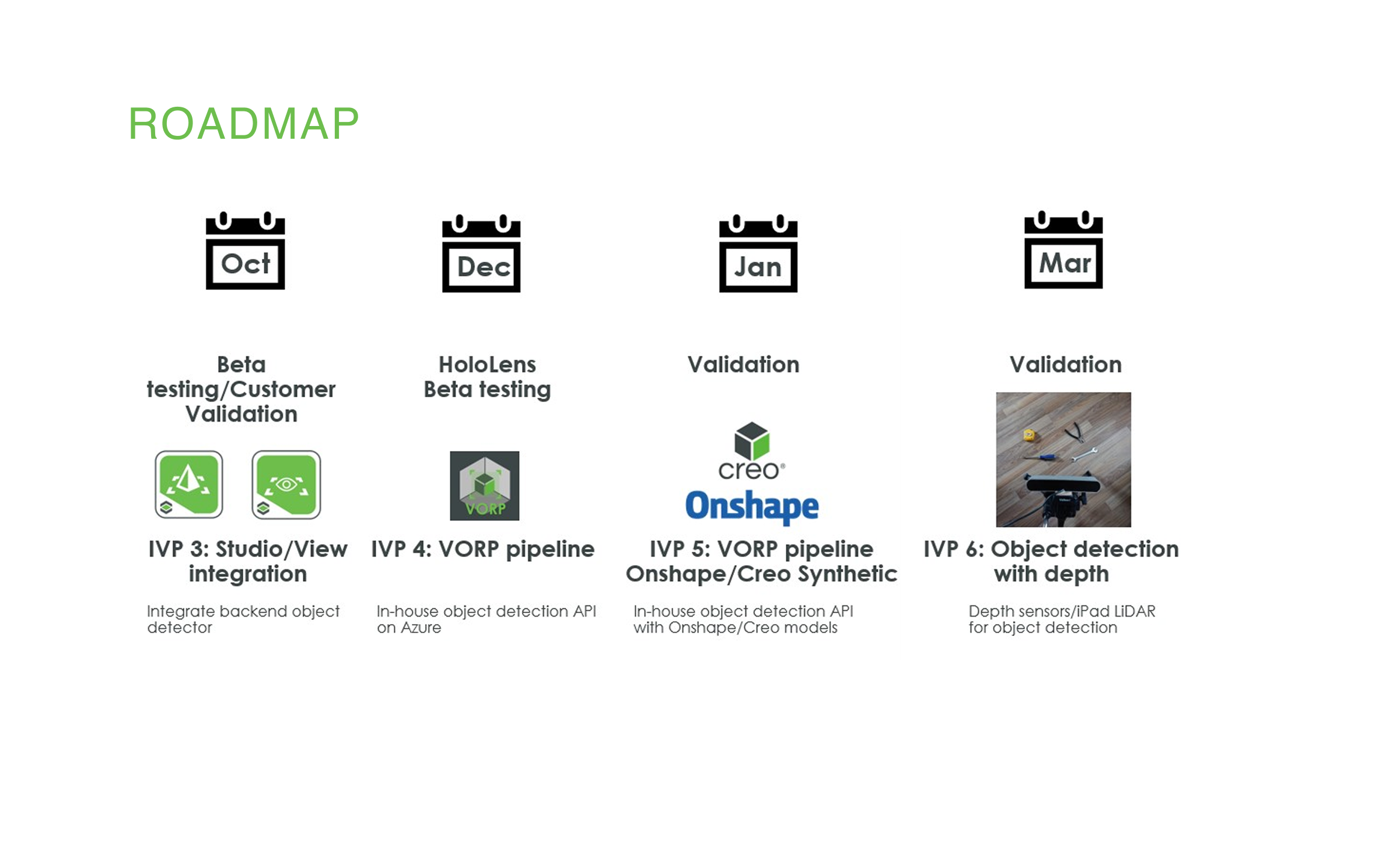

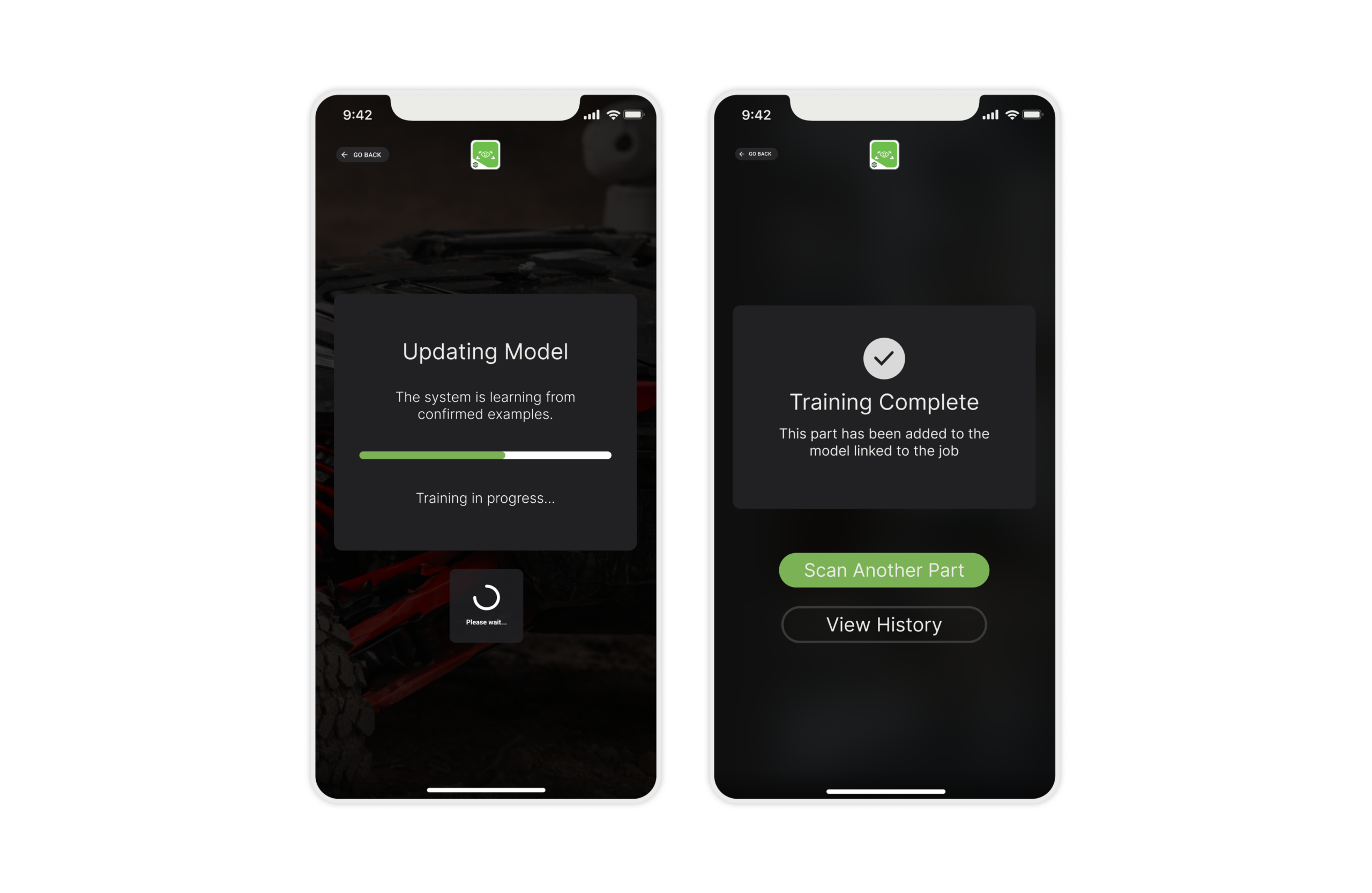

We prototyped using a “hub and spoke” model on Azure Kubernetes Service. We followed a strict Innovation Value Proposition (IVP) roadmap, moving from IVP 3 (Studio Integration) in October to IVP 4 (HoloLens Beta) in December, validating mobile before tackling headsets.

- Iteration 1 (Mobile): We tested continuous scanning mode. It drained the battery in 15 minutes.

- Pivot: I redesigned the interaction to “Target & Tap” (user manually triggers scan), which saved battery and gave users control.

- Collaboration: I worked with engineers to map confidence scores (0.8932) into a 3-tier UI: Green (High Confidence), Yellow (Needs Review), Red (Try Again).

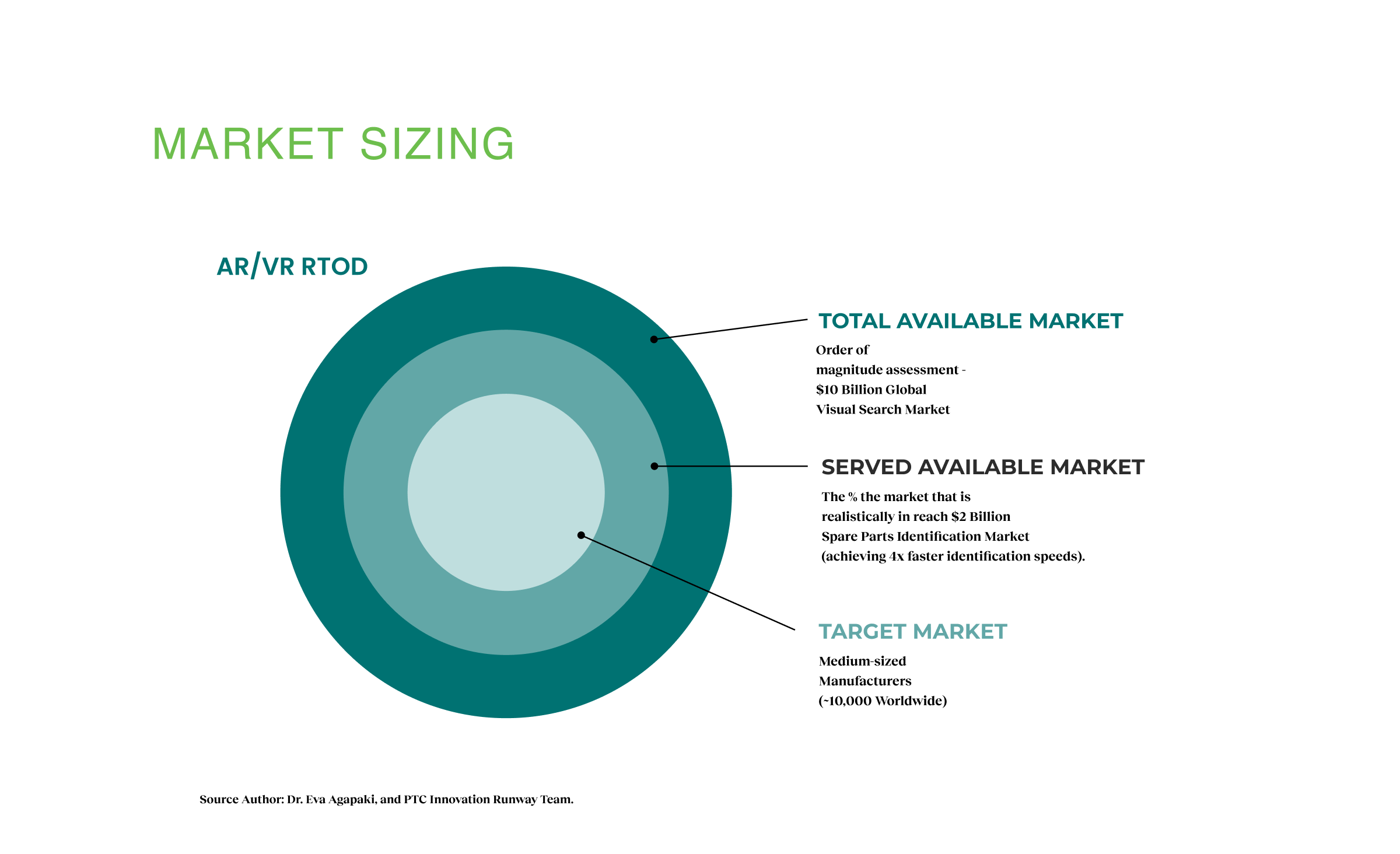

Real-time, AI-powered part identification runs across Mobile and RealWear platforms, disrupting the $10 billion manual lookup market. The three flows (Classify, Detect, Label & Train) generated $20M ARR across 30,000 enterprise customers while reducing inspection errors by 30%.

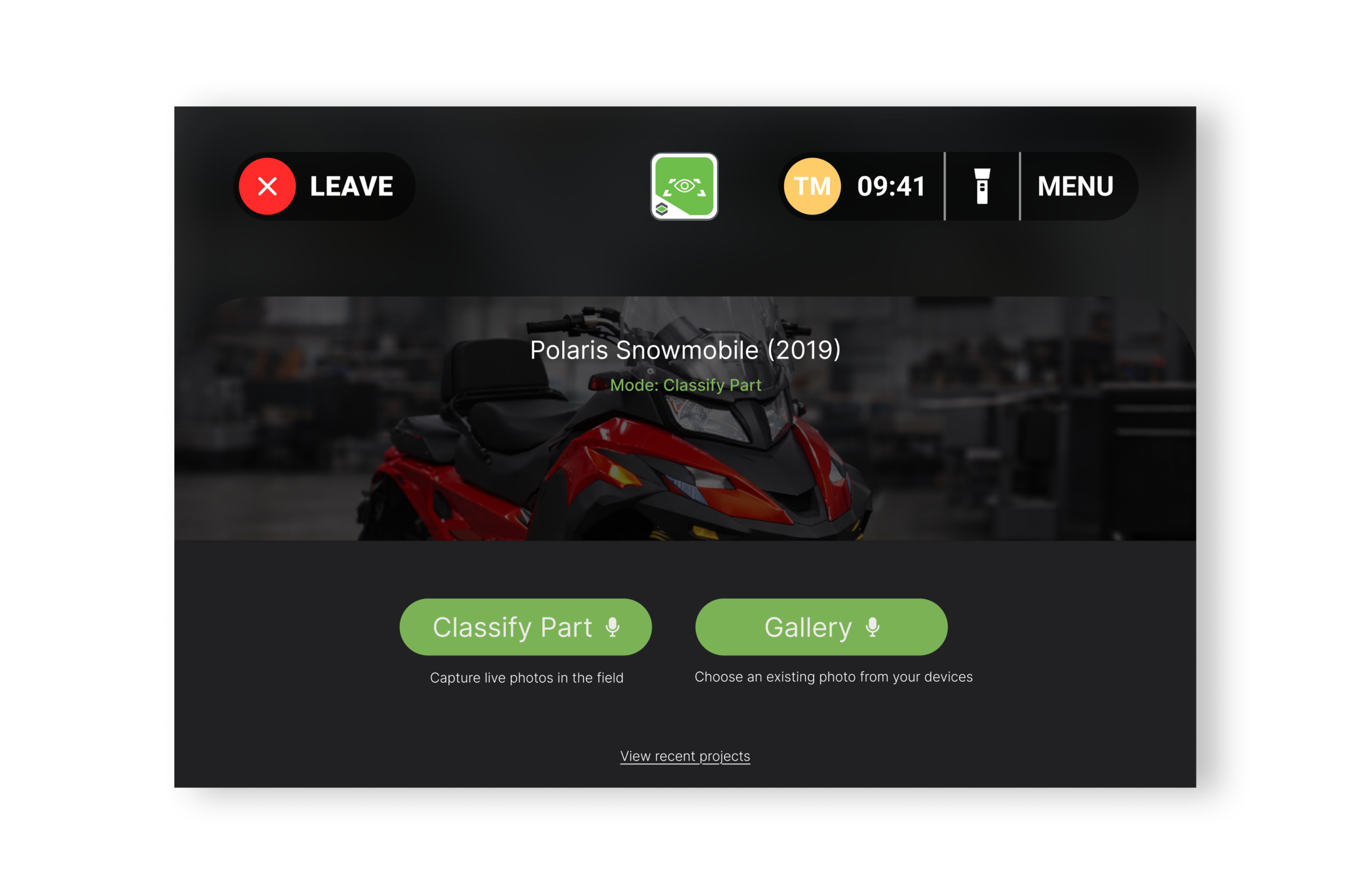

Core Experience Flows

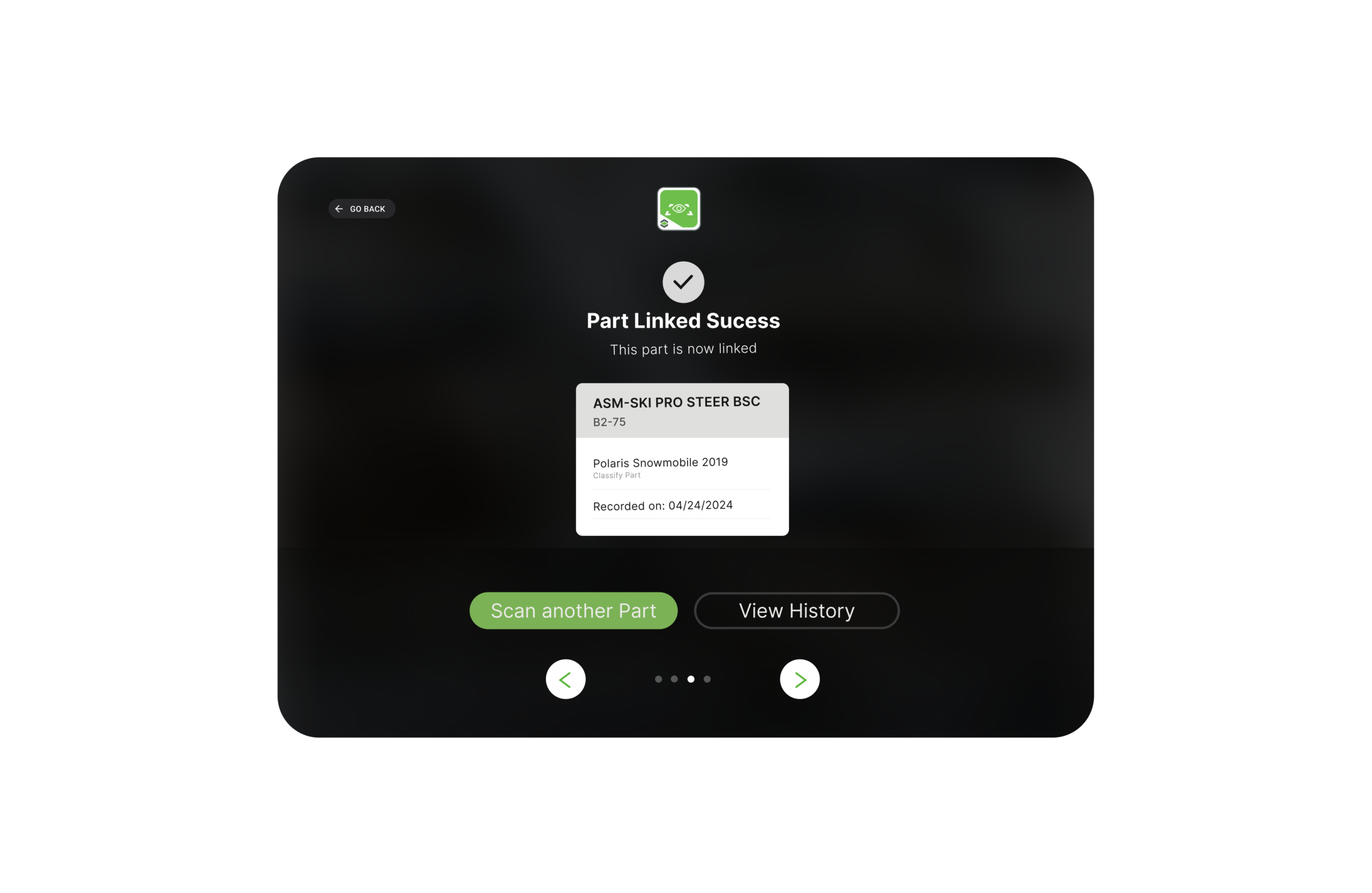

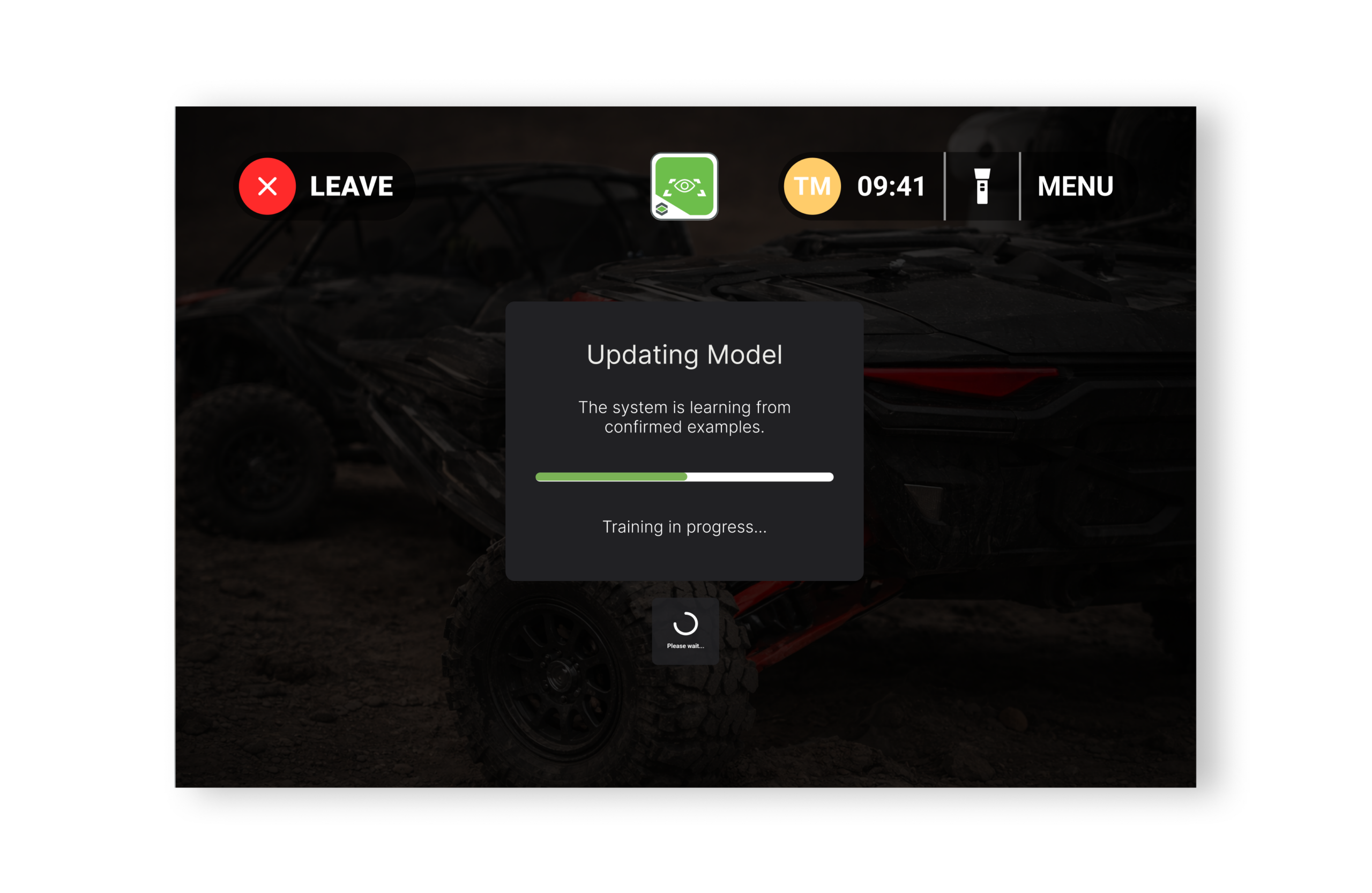

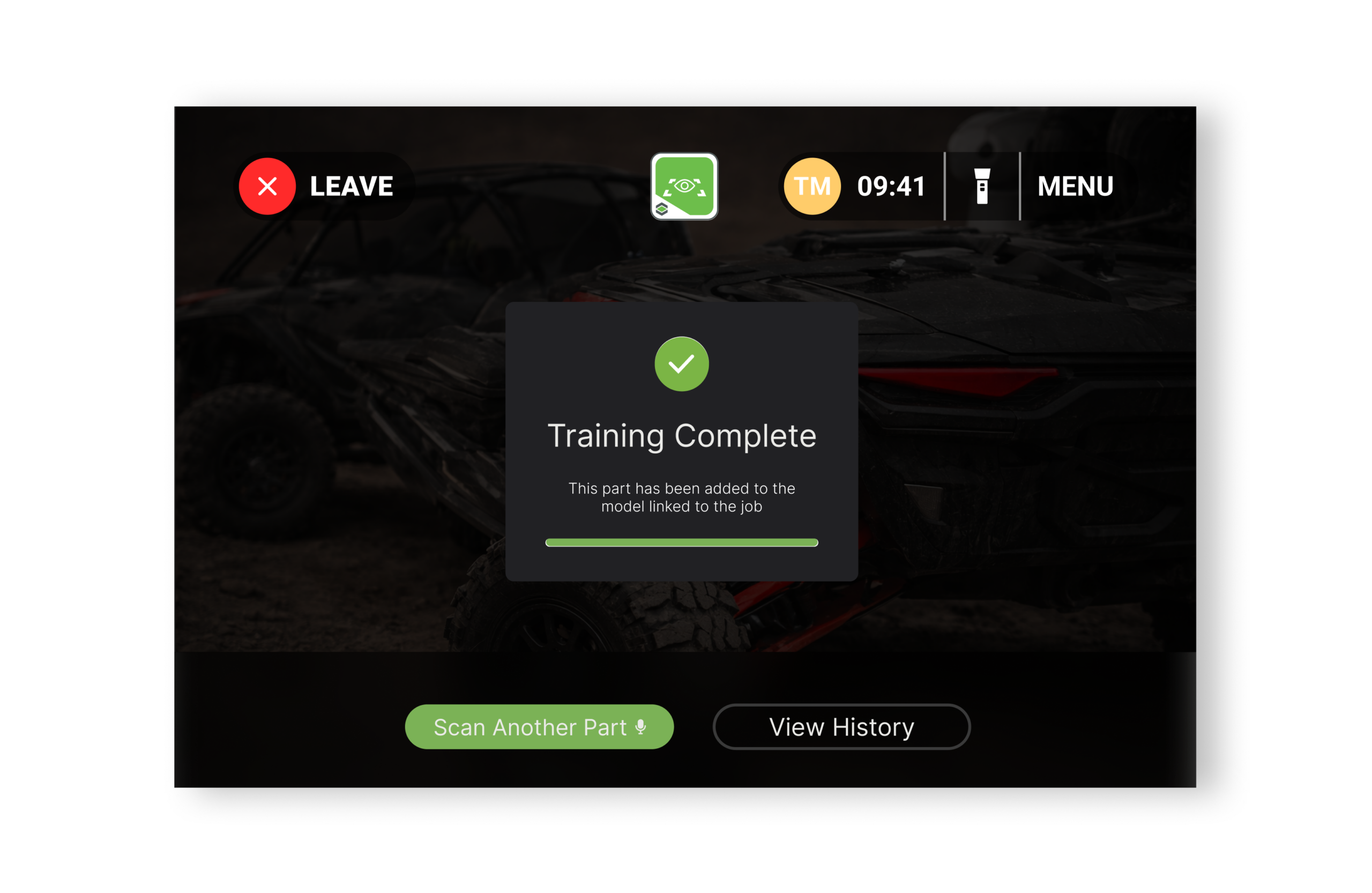

Three distinct flows answer different questions: Classify (What is this single part?), Detect (Where are all parts?), Label & Train (How does the system improve?). Together they form a learning loop where better data creates trust, and better trust creates adoption, compounding value over time.

Context filtering, capture, and verification appear across Mobile and RealWear. Classify serves technicians identifying single parts. Detect enables operators scanning at scale. Label & Train improves the system. Each flow resolves ambiguity in extreme conditions, turning probabilistic output into actionable, trusted decisions.

Faster Part Recognition

Part identification improved from 15-20 min to 10-12 min.

Error Reduction

Fewer inspection mistakes through AI-assisted verification.

ARR

Pilot contracts with Porsche, German automotive OEMs, and IKEA generated significant revenue.

Reflections & Impact

The AR Handbook bridged the gap between complex AI research and practical tools that technicians could rely on in tough factory conditions. After six years in research labs, we shipped a system that hit 90% accuracy in real factories. This proved that training AI on synthetic CAD data could actually work at scale.

Testing with Porsche, German automotive manufacturers, and IKEA showed a 30-40% reduction in how long it took technicians to identify parts, cutting lookup time from 15-20 minutes down to 10-12 minutes. At the same time, inspection errors dropped by 30%. The AI didn’t just help technicians work faster. It helped them work better.

The real breakthrough wasn’t just technical. It was organizational. By building a working prototype and testing it in actual factories, we secured $1.7M in funding and grew to a 17-person team across six countries. Success with major manufacturers led to adoption across 30,000 enterprise customers, showing the company that design-driven AI was worth investing in, not just a research project.

Next Steps

- Scale information architecture by researching dynamic taxonomies and adaptive filtering patterns to maintain sub-30-second identification as libraries grow from 200 to 2,000+ parts across enterprise portfolios.

- Design offline states that calibrate trust when edge-based inference operates at lower accuracy, expanding addressable market to disconnected industrial environments while maintaining user confidence.

- Optimize voice interaction through on-site studies in 90dB+ environments, testing multimodal patterns (gaze selection combined with voice confirmation) to reduce command failure below 5%.

- Close the learning loop by designing single-tap error correction that automatically queues rejected predictions for model improvement, accelerating accuracy gains from 3-month to 1-month cycles.